Continuing our efforts to add support for the latest generation of Azure Virtual Machines, Instaclustr is pleased to announce support for the D15v2 and L8v2 types with local storage for Apache Cassandra clusters on the Instaclustr Managed Platform. Let’s begin by introducing the two forms of storage: local and remote.

Local storage is a non-persistent (i.e. volatile) storage that is internal to the physical Hyper-V host that a VM is running on at any given time. As soon as the VM is moved to another physical host as a result of a deallocate (stop) and start command, hardware or VM crash, or Azure maintenance, the local storage is wiped clean and any data stored on it is lost.

Remote storage is persistent and comes in the form of managed (or unmanaged) disks. These disks can have different performance and redundancy characteristics. Remote storage is generally used for a VM’s OS disk and for data disks that are intended to permanently store important data. A remote disk can be detached from a VM on one physical host and attached to a VM on another, which preserves the data. The disk is stored in an Azure storage account, and the VM attaches it over the network. The physical Hyper-V host running the VM is independent of the remote storage account, which means that any Hyper-V host or VM can mount a remotely stored disk, and can do so more than once.

Remote storage has the critical advantage of being persistent, while local storage has the advantage of being faster (because it’s local to the VM—on the same physical host) and included in the VM cost. When starting an Azure VM you only pay for its OS and disks. Local storage on a VM is included in the price of the VM and often provides a much cheaper storage alternative to remote storage.

The Azure Node Types

D15_v2s

D15_v2s are the latest addition to Azure’s Dv2 series, featuring a more powerful CPU and an optimal CPU-to-memory configuration, making them suitable for most production workloads. The Dv2-series is about 35% faster than the D-series. D15_v2s are a memory-optimized VM size offering a high memory-to-CPU ratio that is great for distributed database servers like Cassandra, medium to large caches, and in-memory data stores such as Redis.

Instaclustr customers can now leverage these benefits with the release of the D15_v2 VMs, which provide 20 virtual CPU cores and 140 GB RAM backed with 1000 GB of local storage.

L8_v2s

L8_v2s are part of the Lsv2-series, featuring high throughput, low latency, and directly mapped local NVMe storage. L8s, like the rest of the series, were designed to cater to high throughput and high IOPS workloads including big data applications, SQL and NoSQL databases, data warehousing, and large transactional databases. Examples include Cassandra, Elasticsearch, and Redis. In general, applications that can benefit from large in-memory databases are a good fit for these VMs.

L8s offer 8 virtual CPU cores and 64 GB of RAM backed by 1388 GB of local storage. L8s provide roughly equivalent compute capacity (cores and memory) to a D13v2 at about ¾ of the price. This offers speed and efficiency at the cost of forgoing persistent storage.

Benchmarking

Prior to the public release of these new instances on Instaclustr, we conducted Cassandra benchmarking for:

| VM Type | CPU Cores | RAM | Storage Type | Disk Size (Type) |
| --- | --- | --- | --- | --- |
| DS13v2 | 8 | 56 GB | remote | 2046 GiB (SSD) |
| D15v2 | 20 | 140 GB | local | 1000 GiB (SSD) |
| L8s w/local | 8 | 64 GB | local | 1388 GiB (SSD) |
| L8s w/remote | 8 | 64 GB | remote | 2046 GiB (SSD) |

All tests were conducted using Cassandra 3.11.6.

The results are designed to outline the performance of utilizing local storage and the benefits it has over remote storage. Testing is split into two groups, fixed and variable testing. Each group runs two different sets of payload:

- Small: smaller, more frequent payloads that are quick to execute but are requested in large numbers.

- Medium: larger payloads that take longer to execute and can potentially slow Cassandra’s ability to process requests.

The fixed testing procedure is:

- Insert data to fill disks to ~30% full.

- Wait for compactions to complete.

- Run a fixed rate of operations.

- Run a sequence of tests consisting of read, write, and mixed read/write operations.

- Run the tests and measure the operational latency for a fixed rate of operations.
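As a rough illustration of what measuring latency at a fixed rate involves, the sketch below paces calls to an arbitrary operation and summarizes the latencies with the same statistics reported later in this post (mean, median, 95th and 99th percentile). It is not the harness used for these benchmarks: `run_fixed_rate`, `summarize`, and the `operation` callable are hypothetical names, and a real test would issue Cassandra requests from many client threads rather than a single loop.

```python
import statistics
import time


def run_fixed_rate(operation, ops_per_second, duration_s):
    """Call `operation` at a fixed rate; return per-call latencies in ms.

    `operation` is a hypothetical zero-argument callable standing in for
    a single read or write request.
    """
    interval = 1.0 / ops_per_second
    latencies_ms = []
    start = time.perf_counter()
    next_due = start
    while next_due - start < duration_s:
        # Sleep until the next scheduled send time so the offered rate
        # stays fixed even if individual operations are fast.
        delay = next_due - time.perf_counter()
        if delay > 0:
            time.sleep(delay)
        t0 = time.perf_counter()
        operation()
        latencies_ms.append((time.perf_counter() - t0) * 1000.0)
        next_due += interval
    return latencies_ms


def summarize(latencies_ms):
    """Return mean, median, and 95th/99th percentile latency."""
    cuts = statistics.quantiles(latencies_ms, n=100)  # 99 cut points
    return {
        "mean": statistics.fmean(latencies_ms),
        "median": statistics.median(latencies_ms),
        "p95": cuts[94],
        "p99": cuts[98],
    }
```

Pacing against a schedule (`next_due`) rather than sleeping a fixed interval after each call keeps the offered rate from drifting when operations take a variable amount of time.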

The variable testing procedure is:

- Insert data to fill disks to ~30% full.

- Wait for compactions to complete.

- Target a median latency of 10ms.

- Run a sequence of tests comprising read, write, and mixed read/write operations. These tests were broken down into multiple types.

- Run the tests and measure the results including operations per second and median operation latency.

- Quorum consistency for all operations.

- We incrementally increase the thread count and re-run the tests until we have hit the target median latency and are under the allowable pending compactions.

- Wait for compactions to complete after each test.
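The ramp-up in the variable procedure can be sketched as a simple loop. This is only one reading of the steps above: `run_test` and `wait_for_compactions` are hypothetical callables, and the pending-compaction limit, starting thread count, and step size are illustrative assumptions, since the post does not state the values used.

```python
def find_max_throughput(run_test, wait_for_compactions=lambda: None,
                        target_median_ms=10.0, max_pending_compactions=20,
                        start_threads=4, step=4, max_threads=512):
    """Raise the thread count until the target median latency is reached.

    `run_test(threads)` is a hypothetical callable returning a dict with
    "ops_per_s", "median_ms", and "pending_compactions". All default
    values except the 10 ms target are assumptions for illustration.
    """
    best = None
    threads = start_threads
    while threads <= max_threads:
        result = run_test(threads)
        wait_for_compactions()  # let the cluster settle after each test
        if result["pending_compactions"] > max_pending_compactions:
            break  # cluster is falling behind on compactions; discard run
        best = {"threads": threads, **result}
        if result["median_ms"] >= target_median_ms:
            break  # target median latency reached
        threads += step
    return best
```

The function returns the last run that stayed within the pending-compaction limit, or `None` if even the first run exceeded it.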

As with any generic benchmarking results, the performance may vary from the benchmark for different data models or application workloads. However, we have found this to be a reliable test for comparison of relative performance that will match many practical use cases.

Comparison

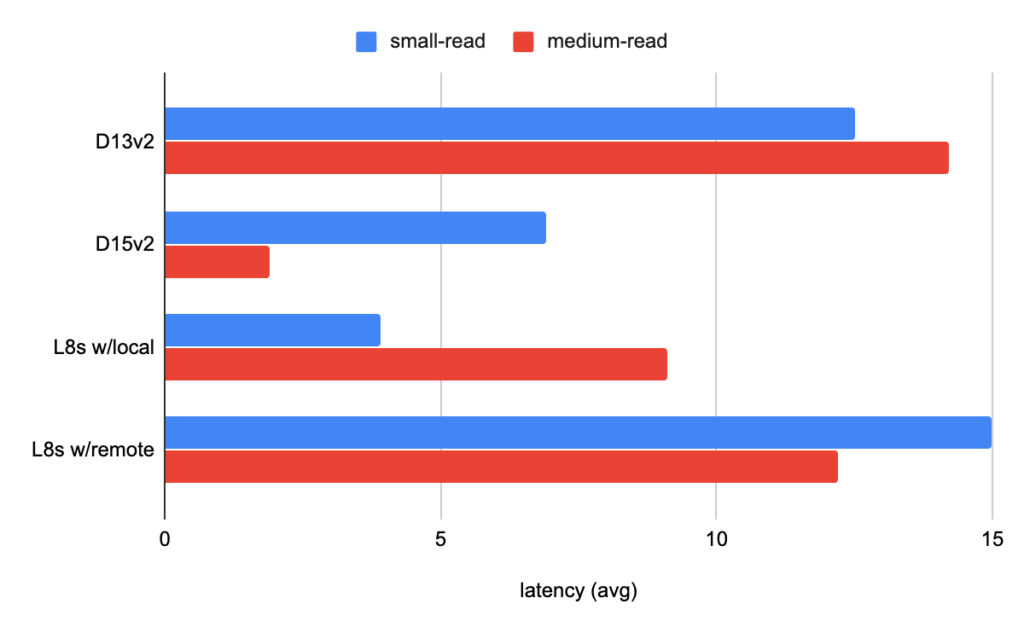

When comparing performance between instance types with local and remote storage, it is important to take note of the latency of the operations. The latency of read operations indicates how long a request takes to retrieve information from the disk and return it to the client.

In the fixed small and medium read tests, instances with local storage offered better latency than instances with remote storage. This is more noticeable in the medium read tests, where the tests had a larger payload and required more interaction with the locally available disks. Both L8s (with local storage) and D15_v2s delivered lower latency at the operation rate given to the cluster.

When running the variable-read-small tests, the operation rate for D15v2 reached about 2.6 times the ops/s of DS13v2s with remote storage. Likewise, L8s with local storage outperformed L8s with remote storage, achieving roughly 2.8 times the operation rate at less than half the mean latency.

| variable-read-small | L8s w/local | L8s w/remote | DS13_v2 | DS15_v2 |
| --- | --- | --- | --- | --- |
| Operation Rate (ops/s) | 19,974 | 7,198 | 23,985 | 61,489 |
| Latency mean (avg) | 22.1 | 48.3 | 21.9 | 19.4 |
| Latency median | 20.1 | 40.3 | 19.9 | 17.7 |
| Latency 95th percentile | 43.6 | 123.5 | 43.3 | 39.2 |
| Latency 99th percentile | 66.9 | 148.9 | 74.3 | 58.9 |

In the medium read tests, instances with local storage outperformed instances with remote storage. Both L8s (with local storage) and D15s achieved far better latency while sustaining a significantly higher operation rate. This makes a very convincing argument for local storage over remote storage when seeking optimal performance.

| variable-read-medium | L8s w/local | L8s w/remote | DS13_v2 | DS15_v2 |
| --- | --- | --- | --- | --- |
| Operation Rate (ops/s) | 235 | 76 | 77 | 368 |
| Latency mean (avg) | 4.2 | 13 | 12.9 | 2.7 |
| Latency median | 3.2 | 8.4 | 9.5 | 2.5 |
| Latency 95th percentile | 4.9 | 39.7 | 39.3 | 3.4 |
| Latency 99th percentile | 23.5 | 63.4 | 58.8 | 4.9 |
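The relative throughput figures for the variable read tests can be recomputed directly from the two tables above. The short script below copies the operation rates and mean latencies from those tables (using the column labels as printed) and derives the local-versus-remote ratios; the helper name `throughput_ratio` is just for illustration.

```python
# (operation rate in ops/s, mean latency) copied from the tables above.
results = {
    "variable-read-small": {
        "L8s w/local": (19_974, 22.1),
        "L8s w/remote": (7_198, 48.3),
        "DS13_v2": (23_985, 21.9),
        "DS15_v2": (61_489, 19.4),
    },
    "variable-read-medium": {
        "L8s w/local": (235, 4.2),
        "L8s w/remote": (76, 13.0),
        "DS13_v2": (77, 12.9),
        "DS15_v2": (368, 2.7),
    },
}


def throughput_ratio(test, a, b):
    """How many times more ops/s instance type `a` achieved than `b`."""
    return results[test][a][0] / results[test][b][0]


for test in results:
    l8 = throughput_ratio(test, "L8s w/local", "L8s w/remote")
    d15 = throughput_ratio(test, "DS15_v2", "DS13_v2")
    print(f"{test}: L8s local/remote = {l8:.1f}x, "
          f"DS15_v2/DS13_v2 = {d15:.1f}x")
# variable-read-small: L8s local/remote = 2.8x, DS15_v2/DS13_v2 = 2.6x
# variable-read-medium: L8s local/remote = 3.1x, DS15_v2/DS13_v2 = 4.8x
```

The gap widens for the medium payloads, which is consistent with larger reads spending more of their time on disk access.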

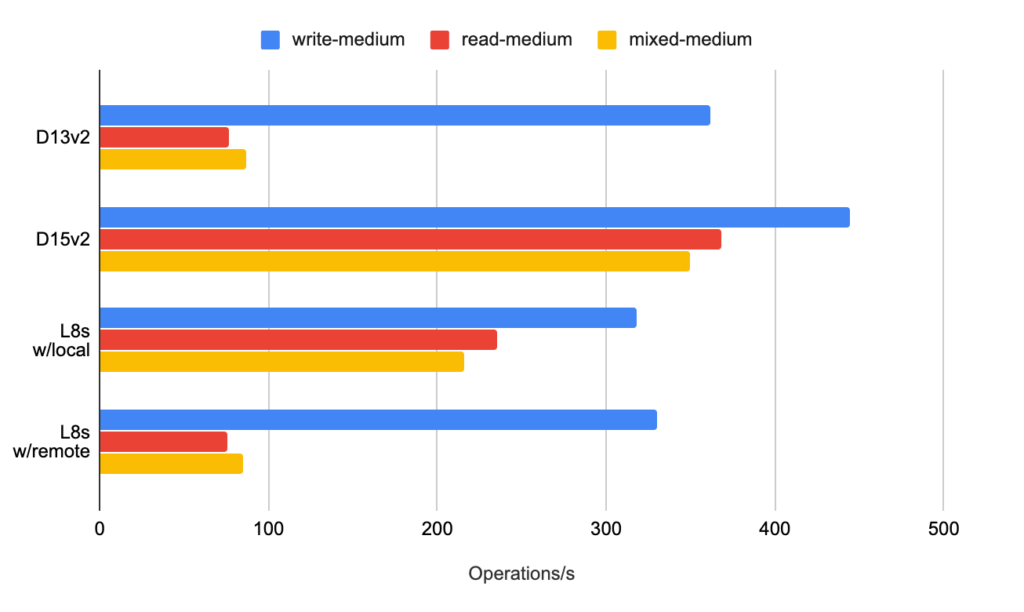

Looking at the writes, on the other hand, D15s outperformed the other instance types due to their large pool of CPU cores. While the differences were less obvious in the small tests, they were more pronounced in the medium tests. Further investigation will be conducted to determine why this is the case.

Based on the graph for variable medium testing, D15v2 outperformed the other instance types in all categories. D15v2s have both a larger number of cores, which lets them keep up with heavy write loads, and local storage, which offers faster disk-intensive reads. This was additionally supported by strong performance in the mixed medium testing results.

L8s with local storage took second place, performing better than DS13v2s in the read and mixed tests, while DS13v2 nodes slightly edged out L8s in writes for larger payloads. The mixed results showed a substantial difference in performance between the two, with L8s taking the lead thanks to local storage providing faster disk-intensive reads.

Conclusion

Based on the results from this comparison, we find that local storage offers excellent performance for disk-intensive operations such as reads. D15v2 nodes, with their large number of cores for CPU-intensive writes and local storage for disk-intensive reads, offer top-tier performance for any production environment.

Furthermore, L8s with local storage offer a cost-efficient solution at around ¾ of the price of D13v2s and deliver better price-performance, notably in read operations. This is especially beneficial for a production environment that prioritizes reads over writes.

To assist customers in upgrading their Cassandra clusters from their current VM types to D15v2 or L8 nodes, the Instaclustr technical operations team has built several tried and tested node replacement strategies that provide zero-downtime, non-disruptive migrations. Reach out to our support team if you are interested in these new instance types for your Cassandra cluster.

You can access pricing details through the Instaclustr Console when you log in, or contact our Support team.