What Are Agentic AI Frameworks?

Agentic AI frameworks are software toolkits that simplify the creation of autonomous AI agents, providing developers with pre-built components for tasks like perception, reasoning, action, and memory management to build complex, goal-driven systems.

Popular frameworks include CrewAI, LangGraph, AutoGen, LlamaIndex, AutoAgent, DSPy, Haystack, and Microsoft Semantic Kernel, which offer varying capabilities for single-agent and multi-agent orchestration, tool integration, and data retrieval. These frameworks simplify AI development by abstracting complexity, enabling rapid prototyping, and scaling from simple to sophisticated autonomous applications.

Agentic frameworks act as blueprints and toolkits for constructing AI agents: systems designed to perceive, reason, and act autonomously to achieve specific goals. They provide developers with the building blocks needed to create agents that can interact with external systems, make decisions, and manage context over time.

Editor’s note: Updated information about agentic AI frameworks to reflect features and capabilities in 2026, and added two new tools.

In this article:

- Benefits of Agentic AI Frameworks

- Notable Agentic AI Frameworks

- How to Choose the Right Agentic AI Framework

Benefits of Agentic AI Frameworks

Agentic AI frameworks offer a range of advantages for building intelligent, autonomous systems that can operate in complex environments. Their structured approach to agent design and task execution enables more flexible and maintainable AI applications.

- Task decomposition and autonomy: Agents can break down complex goals into smaller tasks, make decisions independently, and execute actions without constant human input.

- Built-in memory and context handling: Persistent memory lets agents retain relevant information across sessions, improving coherence, personalization, and long-term task performance.

- Multi-agent coordination: Frameworks support communication and collaboration among multiple agents, enabling parallel task execution and specialization.

- Tool and API integration: Agents can be connected to external tools, APIs, and databases, allowing them to interact with real-world systems and perform actions beyond language generation.

- Modularity and reusability: Agent behaviors, tools, and memory modules are often designed as reusable components, accelerating development and simplifying maintenance.

- Support for iteration and self-correction: Agents can evaluate outcomes, revise plans, and retry failed steps, making them more resilient in dynamic or uncertain environments.

- Scalable architecture: Many frameworks provide infrastructure for managing large agent systems, supporting use cases from personal assistants to enterprise-level automation.
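To make these benefits concrete, here is a minimal, framework-free Python sketch of the loop most agentic frameworks wrap. It decomposes a goal into steps, calls tools, records observations in memory, retries transient failures, and revises the plan when an observation changes the situation. The tools (`fetch_stock`, `place_order`, `notify`) and the rule-based `plan` function are hypothetical stand-ins for real APIs and an LLM planner.

```python
from typing import Callable

# Hypothetical tools standing in for real APIs. fetch_stock fails on its first
# call to simulate a transient outage, so the retry path is exercised.
_calls = {"fetch_stock": 0}


def fetch_stock(item: str) -> int:
    _calls["fetch_stock"] += 1
    if _calls["fetch_stock"] == 1:
        raise TimeoutError("inventory service timed out")
    return {"widgets": 12, "gears": 0}.get(item, 0)


def place_order(item: str) -> str:
    return f"ordered {item}"


def notify(item: str) -> str:
    return f"notified buyer: {item} out of stock"


TOOLS: dict[str, Callable[[str], object]] = {
    "fetch_stock": fetch_stock,
    "place_order": place_order,
    "notify": notify,
}


def plan(goal: str) -> list[tuple[str, str]]:
    # Task decomposition; a fixed rule stands in for an LLM planner.
    item = goal.split()[-1]
    return [("fetch_stock", item), ("place_order", item)]


def run_agent(goal: str, memory: list[str], max_retries: int = 2) -> list[str]:
    steps = plan(goal)
    while steps:
        tool, arg = steps.pop(0)
        for _ in range(max_retries + 1):
            try:
                result = TOOLS[tool](arg)
                break
            except TimeoutError as exc:
                memory.append(f"{tool} failed ({exc}); retrying")
        else:
            memory.append(f"{tool} kept failing; giving up")
            return memory
        memory.append(f"{tool}({arg}) -> {result}")
        # Self-correction: revise the remaining plan when an observation changes things.
        if tool == "fetch_stock" and result == 0:
            steps = [("notify", arg)]
    return memory


memory: list[str] = []  # caller-owned memory persists across goals
run_agent("order more gears", memory)
run_agent("order more widgets", memory)
for entry in memory:
    print(entry)
```

A real framework replaces `plan` with a model call and persists `memory` outside the process, but the control flow is essentially the same.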

The Importance of Scalable, Efficient, and Reliable Data Infrastructure

Agentic AI frameworks are only as powerful as the data infrastructure supporting them. While the agents themselves provide the reasoning and autonomy, they rely heavily on a continuous flow of high-quality data to perceive their environment, learn from context, and execute decisions. Without a robust backend, even the most sophisticated agents can face bottlenecks, memory loss, or latency issues that cripple their performance.

This is where proven open source technologies become the unsung heroes of AI development. Tools like Apache Kafka®, Apache Cassandra®, PostgreSQL®, OpenSearch®, and ClickHouse® provide the critical foundation needed for scalable, efficient, and reliable agentic operations.

Enabling real-time data ingestion and processing

Autonomous agents often operate in dynamic environments where seconds matter. Whether it’s a customer service bot handling thousands of concurrent requests or a supply chain agent reacting to shipping delays, real-time data ingestion is non-negotiable.

Apache Kafka serves as the central nervous system for these high-velocity data streams. It allows agents to subscribe to real-time events, ensuring they always have the most current information to act upon. By decoupling data producers from consumers, Kafka ensures that agents can process streams of information—like sensor data or user interactions—without overwhelming the system, maintaining high throughput even during peak loads.
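As a rough illustration of that decoupling, the sketch below uses Python's standard `queue` and `threading` modules as an in-memory stand-in for a Kafka topic; it is not Kafka client code. The bounded queue shows the key property: the producer only blocks when the buffer is full, and the agent consumes events at its own pace. The event fields and the 24-hour rerouting rule are hypothetical.

```python
import queue
import threading

# In-memory stand-in for a Kafka topic (hypothetical; real Kafka adds durable
# logs, partitions, consumer groups, and committed offsets).
topic = queue.Queue(maxsize=100)


def producer(events: list[dict]) -> None:
    for event in events:
        topic.put(event)  # blocks only when the buffer is full (backpressure)
    topic.put(None)  # sentinel: no more events


def agent_consumer(decisions: list[str]) -> None:
    # The agent reads events at its own pace, independent of the producer.
    while (event := topic.get()) is not None:
        if event["delay_hours"] > 24:
            decisions.append(f"reroute shipment {event['shipment']}")


decisions: list[str] = []
events = [{"shipment": "A1", "delay_hours": 2}, {"shipment": "B7", "delay_hours": 36}]
threads = [
    threading.Thread(target=producer, args=(events,)),
    threading.Thread(target=agent_consumer, args=(decisions,)),
]
for t in threads:
    t.start()
for t in threads:
    t.join()
print(decisions)  # ['reroute shipment B7']
```

In production, the queue would be replaced by a Kafka topic and a consumer group, which adds durable storage, partitioning, and replay from committed offsets.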

Managing complex state and memory

For an agent to be truly “agentic,” it must remember past interactions and maintain context over long periods. This requires persistent storage that is both fast and reliable.

Apache Cassandra and PostgreSQL are essential for managing this stateful information. Cassandra's distributed architecture makes it ideal for handling massive write workloads across multiple regions, ensuring agents have highly available access to their operational history. Meanwhile, PostgreSQL offers robust relational data integrity, well suited to the structured transactional data that agents may need to query or update as part of their decision-making workflow.
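The following sketch shows what conversation memory can look like in a relational store. It uses Python's built-in `sqlite3` so it runs without a server, on the assumption that the same schema and SQL port to PostgreSQL with minor changes (for example, `%s` placeholders in most PostgreSQL drivers). The table name, columns, and the `remember`/`recall` helpers are hypothetical.

```python
import sqlite3

# sqlite3 stands in for PostgreSQL so the sketch runs anywhere.
# Table and column names are hypothetical.
conn = sqlite3.connect(":memory:")
conn.execute("""
    CREATE TABLE agent_memory (
        session_id TEXT    NOT NULL,
        turn       INTEGER NOT NULL,
        role       TEXT    NOT NULL CHECK (role IN ('user', 'agent', 'tool')),
        content    TEXT    NOT NULL,
        PRIMARY KEY (session_id, turn)
    )
""")


def remember(session_id: str, role: str, content: str) -> None:
    # `with conn` commits on success and rolls back if the insert fails.
    with conn:
        (next_turn,) = conn.execute(
            "SELECT COALESCE(MAX(turn), 0) + 1 FROM agent_memory WHERE session_id = ?",
            (session_id,),
        ).fetchone()
        conn.execute(
            "INSERT INTO agent_memory VALUES (?, ?, ?, ?)",
            (session_id, next_turn, role, content),
        )


def recall(session_id: str, last_n: int = 5) -> list[tuple[str, str]]:
    rows = conn.execute(
        "SELECT role, content FROM agent_memory WHERE session_id = ? "
        "ORDER BY turn DESC LIMIT ?",
        (session_id, last_n),
    ).fetchall()
    return rows[::-1]  # oldest first, ready to place into a prompt


remember("s1", "user", "Where is order 42?")
remember("s1", "agent", "It ships Friday.")
remember("s2", "user", "Hi")
print(recall("s1"))
```

The `PRIMARY KEY` and `CHECK` constraints are where relational integrity helps: duplicate turns and unknown roles are rejected by the database rather than by agent code. With concurrent writers to the same session, the `MAX(turn) + 1` pattern needs a stronger guarantee, such as a sequence or a retry on key conflict.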

Powering retrieval and analytics

Agents don’t just react; they research. Retrieval-Augmented Generation (RAG) is a core component of many frameworks, allowing agents to pull relevant knowledge from vast datasets to inform their answers.

OpenSearch plays a pivotal role here by enabling fast, scalable vector search. It allows agents to perform semantic searches across unstructured data—like documents, logs, or chat history—to find the specific context they need to ground their responses in reality.

Similarly, ClickHouse empowers agents with analytical capabilities. Its column-oriented structure allows for lightning-fast analytical queries on large volumes of data. This enables agents to perform complex data analysis tasks on the fly, such as spotting trends or generating reports, adding a layer of analytical intelligence to their autonomous actions.
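To make the OpenSearch retrieval step concrete, the sketch below builds request bodies for the OpenSearch k-NN plugin (an index with a `knn_vector` field and a `knn` query) as plain Python dictionaries. It then reproduces by brute force what a nearest-neighbor search computes, using cosine similarity. The field names, the three-dimensional toy vectors, and the sample documents are assumptions. Real embeddings come from a model, and OpenSearch's scoring depends on the space type configured for the field.

```python
import math

DIM = 3  # toy size; real embedding models produce hundreds of dimensions

# Index body for the OpenSearch k-NN plugin: a knn_vector field next to the raw text.
index_body = {
    "settings": {"index": {"knn": True}},
    "mappings": {"properties": {
        "text": {"type": "text"},
        "embedding": {"type": "knn_vector", "dimension": DIM},
    }},
}


def knn_query(vector: list[float], k: int = 2) -> dict:
    # Search body asking for the k documents whose embeddings are nearest to `vector`.
    return {"size": k, "query": {"knn": {"embedding": {"vector": vector, "k": k}}}}


def cosine(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.hypot(*a) * math.hypot(*b))


# What the search computes, done by brute force over a handful of documents.
docs = {
    "refund policy": [0.9, 0.1, 0.0],
    "shipping delays": [0.1, 0.9, 0.2],
    "gpu drivers": [0.0, 0.2, 0.9],
}
query_vec = [0.8, 0.3, 0.0]  # pretend embedding of "how do I get my money back?"
ranked = sorted(docs, key=lambda d: cosine(docs[d], query_vec), reverse=True)
print(ranked[:2])  # ['refund policy', 'shipping delays']
```

The dictionaries can be sent with any OpenSearch client or HTTP library; the brute-force ranking is only there to show what the index approximates at scale.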

By leveraging these open source technologies, developers can build agentic AI systems that are not just smart but enterprise-ready: capable of scaling and operating reliably in the real world.

Related content: Learn how to power AI workloads with open source

Notable Agentic AI Frameworks

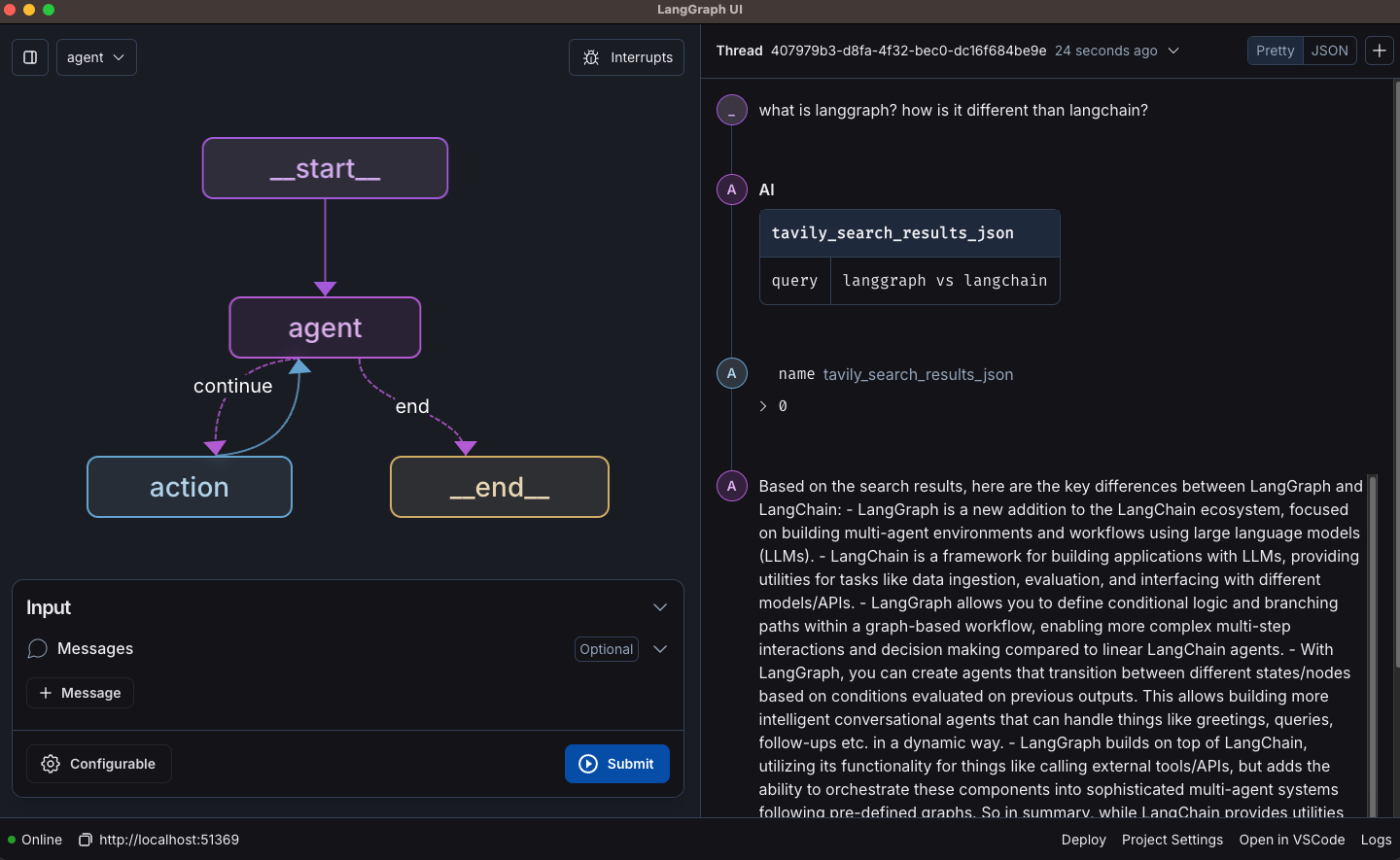

1. LangGraph


LangGraph is a framework from the LangChain ecosystem for building stateful, controllable agent workflows. It provides low-level primitives for designing single-agent and multi-agent systems with explicit control over execution flow, memory, and moderation. The framework is designed for production use cases that require transparency, human oversight, and persistent context.

Key features include:

- Human-in-the-loop controls: Insert moderation and approval steps into workflows to guide or validate agent actions.

- Custom workflow primitives: Define single-agent, multi-agent, or hierarchical control flows using flexible low-level building blocks.

- Persistent memory: Store and retrieve conversation history and contextual state across sessions.

- Stateful multi-actor support: Build applications where multiple agents coordinate while maintaining shared or isolated state.

- Real-time streaming: Stream tokens and intermediate reasoning steps to improve transparency and user experience.

- Production-oriented design: Built to support reliable, long-running agent workflows.
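LangGraph's core idea is a graph of nodes that read and update shared state, with conditional edges, checkpoints, and pauses for human approval. The sketch below illustrates those ideas in plain Python. It is deliberately not LangGraph's API; the `MiniGraph` class, the node names, and the refund scenario are hypothetical.

```python
from typing import Callable, Optional

State = dict
Node = Callable[[State], State]
Router = Callable[[State], str]


class MiniGraph:
    """Framework-agnostic sketch of a checkpointed state graph; NOT LangGraph's API."""

    def __init__(self, interrupt_before: str = "human_review") -> None:
        self.nodes: dict[str, Node] = {}
        self.routes: dict[str, Router] = {}
        self.interrupt_before = interrupt_before
        self.checkpoints: dict[str, tuple[str, State]] = {}  # thread_id -> (next node, state)

    def add_node(self, name: str, fn: Node, route: Router) -> None:
        self.nodes[name] = fn
        self.routes[name] = route  # conditional edge: picks the next node from the state

    def run(self, thread_id: str, state: Optional[State] = None, start: str = "draft") -> State:
        node, state = self.checkpoints.get(thread_id, (start, state or {}))
        while node != "END":
            if node == self.interrupt_before and "approved" not in state:
                self.checkpoints[thread_id] = (node, state)  # persist and pause for a human
                return state
            state = self.nodes[node](dict(state))
            node = self.routes[node](state)
            self.checkpoints[thread_id] = (node, state)  # checkpoint after every step
        return state

    def resume(self, thread_id: str, **updates) -> State:
        # Merge the human's decision into the saved state and continue from the checkpoint.
        node, state = self.checkpoints[thread_id]
        self.checkpoints[thread_id] = (node, {**state, **updates})
        return self.run(thread_id)


graph = MiniGraph()
graph.add_node("draft", lambda s: {**s, "reply": f"Refund {s['amount']} USD?"},
               route=lambda s: "human_review" if s["amount"] > 100 else "send")
graph.add_node("human_review", lambda s: s,
               route=lambda s: "send" if s["approved"] else "END")
graph.add_node("send", lambda s: {**s, "sent": True}, route=lambda s: "END")

paused = graph.run("thread-1", {"amount": 250})  # stops before human_review
final = graph.resume("thread-1", approved=True)  # a human approves; the run continues
print(paused, final, sep="\n")
```

Here the checkpoints live in a dictionary; a production system would store them in a database so a paused run could be resumed after a restart or from a different process.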

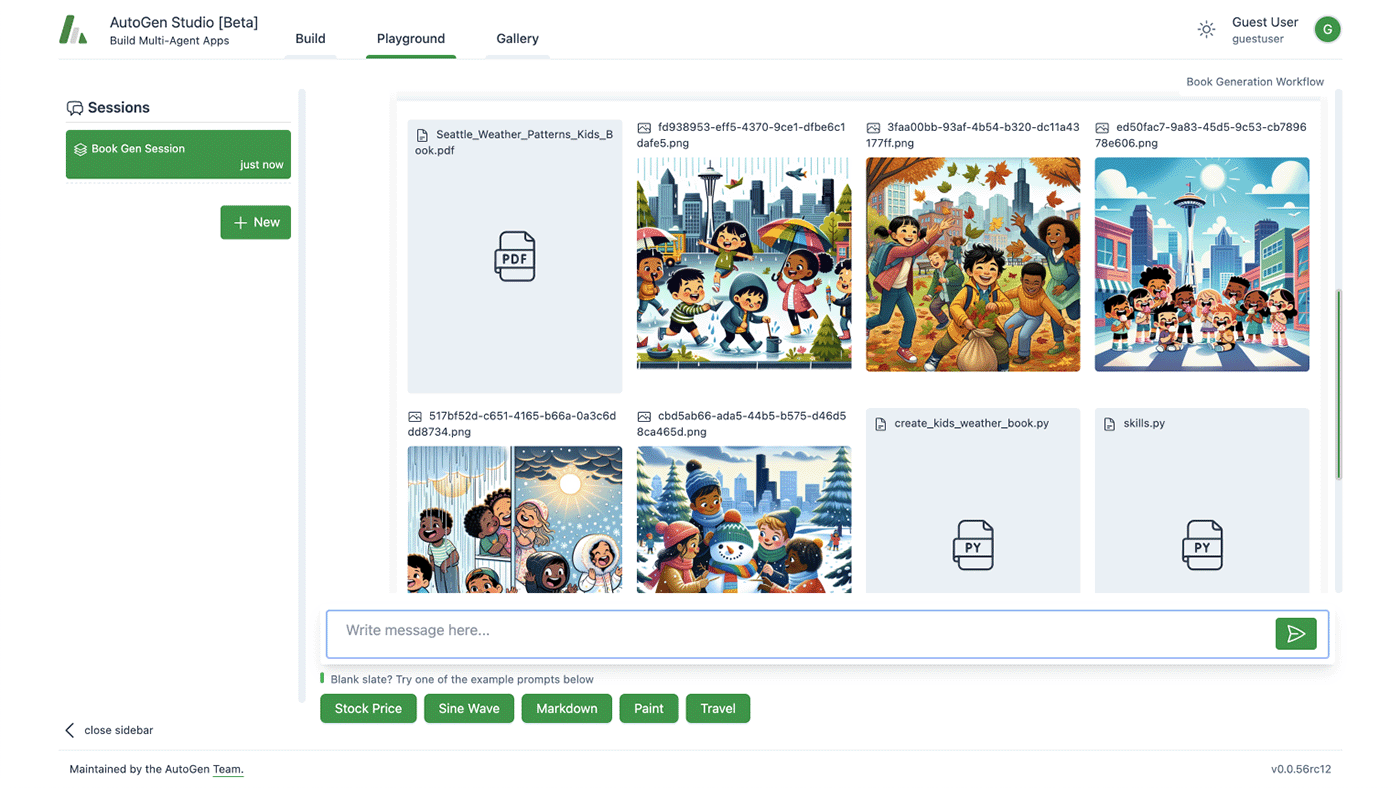

2. AutoGen

AutoGen is a framework for building conversational AI agents and multi-agent systems with layered abstractions. It supports both rapid prototyping through a web-based interface and programmatic development for scalable, event-driven agent orchestration. The framework separates concerns into Studio (no-code), AgentChat (Python API), Core (event-driven runtime), and Extensions for integrations.

Key features include:

- No-code prototyping with Studio: Web-based interface for building and testing agent workflows without writing code.

- AgentChat framework: Python API for creating conversational single-agent and multi-agent applications.

- Event-driven core runtime: Supports deterministic and dynamic workflows for scalable, distributed multi-agent systems.

- Extension ecosystem: Integrates with external services through components such as MCP servers, OpenAI Assistant API adapters, Docker-based code execution, and gRPC runtimes.

- Support for distributed agents: Enables multi-language and distributed deployment scenarios.

- Modular architecture: Separates orchestration logic, agent behavior, and integrations for flexibility and maintainability.
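AutoGen's Core layer is described as an event-driven runtime: agents react to messages and publish new ones rather than calling each other directly. The plain-Python sketch below shows that pattern with a type-based publish/subscribe loop. It is not AutoGen's API; `Runtime`, the message classes, and the two agents are hypothetical.

```python
from collections import defaultdict, deque
from dataclasses import dataclass
from typing import Callable


@dataclass
class Task:
    text: str


@dataclass
class Result:
    text: str


class Runtime:
    """Sketch of an event-driven agent runtime (not AutoGen's API)."""

    def __init__(self) -> None:
        self.handlers: dict[type, list[Callable]] = defaultdict(list)
        self.queue: deque = deque()
        self.log: list[str] = []

    def subscribe(self, msg_type: type, handler: Callable) -> None:
        self.handlers[msg_type].append(handler)

    def publish(self, msg: object) -> None:
        self.queue.append(msg)

    def run_until_idle(self) -> None:
        # Deliver messages in order; handlers may publish follow-up messages.
        while self.queue:
            msg = self.queue.popleft()
            for handler in self.handlers[type(msg)]:
                handler(msg, self)


def coder(msg: Task, rt: Runtime) -> None:
    rt.publish(Result(f"code for: {msg.text}"))


def reviewer(msg: Result, rt: Runtime) -> None:
    rt.log.append(f"reviewed {msg.text}")


rt = Runtime()
rt.subscribe(Task, coder)
rt.subscribe(Result, reviewer)
rt.publish(Task("parse CSV"))
rt.run_until_idle()
print(rt.log)  # ['reviewed code for: parse CSV']
```

Because agents only know message types, not each other, a new agent can be added by subscribing it to an existing message type without changing the others.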

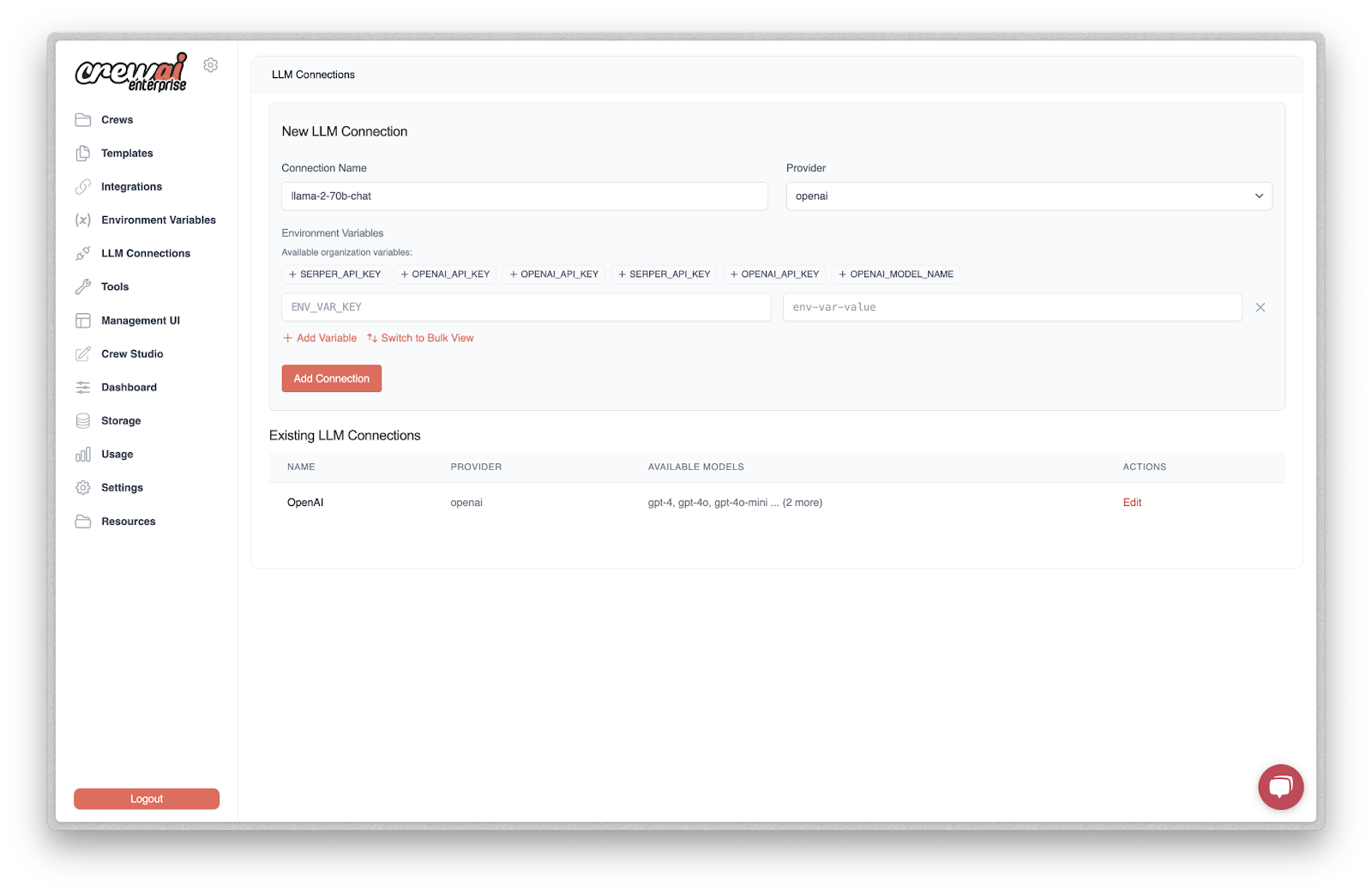

3. CrewAI

CrewAI is a framework for building and operating coordinated teams of AI agents that execute complex workflows autonomously. It supports both visual development and API-driven integration, enabling organizations to define agent roles, connect tools, and monitor performance across environments. The platform includes orchestration, observability, and scaling capabilities for enterprise deployment.

Key features include:

- Multi-agent orchestration: Coordinate teams of agents with planning, reasoning, memory, and tool usage abstractions.

- Visual editor and API support: Build agent crews using a visual interface with AI copilot assistance or programmatically via APIs.

- Tool and application integration: Connect agents to enterprise systems such as Gmail, Slack, Salesforce, and other external tools.

- Workflow tracing and observability: Track task interpretation, tool calls, and outputs with real-time tracing.

- Agent training and guardrails: Apply training workflows and guardrails to improve reliability and repeatability.

- Centralized management and scaling: Manage permissions, monitoring, and serverless scaling across cloud or on-premises environments.
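A crew, at its simplest, is a list of role-scoped agents and tasks executed in order, with each task's output passed as context to the next. The sketch below models that sequential process in plain Python. It is not CrewAI's API; the roles, the one-tool-per-role restriction, and the stub tools (which stand in for LLM calls) are assumptions.

```python
from dataclasses import dataclass
from typing import Callable

Tool = Callable[[str, str], str]  # (instruction, context) -> output


@dataclass
class Agent:
    role: str
    goal: str  # a real framework would feed this into the agent's prompt
    tool: Tool  # one tool per role keeps permissions narrow


@dataclass
class Task:
    description: str
    agent: Agent


def run_crew(tasks: list[Task]) -> list[str]:
    outputs, context = [], ""
    for task in tasks:
        # Sequential process: each task sees the previous task's output as context.
        context = f"{task.agent.role}: {task.agent.tool(task.description, context)}"
        outputs.append(context)
    return outputs


researcher = Agent("Researcher", "find facts",
                   tool=lambda instr, ctx: "Kafka decouples producers and consumers")
writer = Agent("Writer", "summarize findings",
               tool=lambda instr, ctx: f"Summary -> {ctx.split(': ', 1)[1]}")
outputs = run_crew([Task("research Kafka", researcher), Task("write summary", writer)])
for line in outputs:
    print(line)
```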

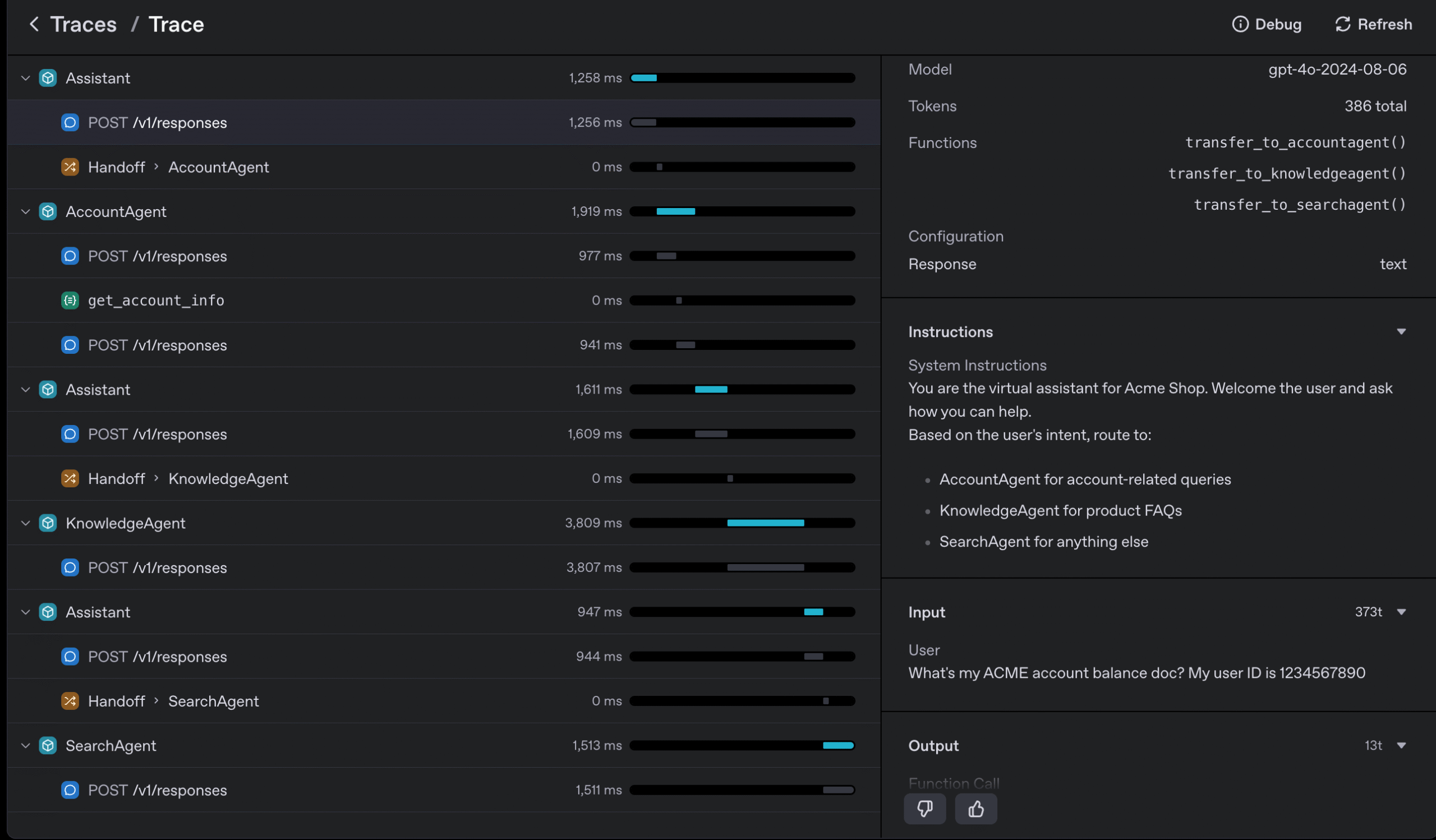

4. OpenAI Agents

OpenAI Agents provide a toolkit for building, deploying, and optimizing agent workflows using OpenAI models and platform components. Through AgentKit and Agent Builder, developers can design agent workflows that combine models, tools, memory, and control logic in a unified environment. The framework supports both visual workflow creation and SDK-based development for custom agentic applications.

Key features include:

- Visual agent builder: Create agent workflows using a canvas interface that combines models, tools, knowledge sources, and logic nodes in one place.

- Model integration: Connect agents to OpenAI models for reasoning, decision-making, and data processing within workflows.

- Tool and connector support: Integrate external services using function calling, web search, file search, connectors, and MCP-based integrations.

- Persistent knowledge and memory: Use vector stores, embeddings, and file search to provide agents with external and long-term context.

- Custom control-flow logic: Define conditional routing, multi-agent coordination, and workflow branching with logic nodes.

- SDK-based extensibility: Build agentic applications programmatically using the Agents SDK instead of the visual builder.

- Deployment with ChatKit: Embed agent workflows into product interfaces using a customizable chat component connected to the backend.

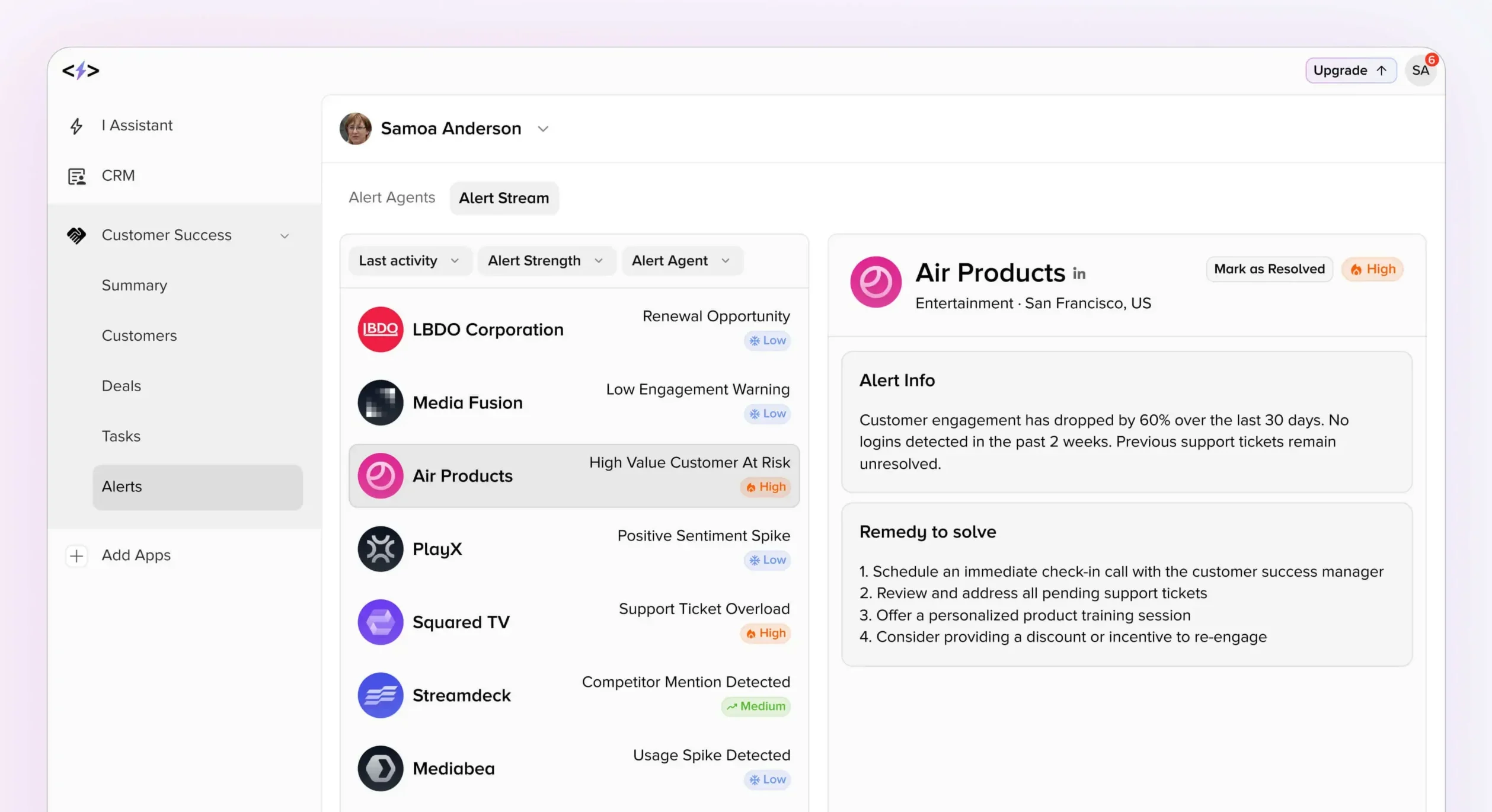

5. SuperAGI

SuperAGI is an AI-native platform that combines multiple AI-driven applications and agents into a unified system for business workflows. It focuses on embedding AI agents across functions such as sales, marketing, customer support, and operations, integrating automation, CRM, communication, and analytics into a single environment.

Key features include:

- Integrated AI-native CRM: Central system of record for contacts, companies, deals, and tasks, designed to connect with AI-driven workflows.

- AI-driven sales automation: Supports cold outreach, multi-channel sequences, lead enrichment, and signal-based prospecting.

- Workflow automation engine: Drag-and-run workflows that connect signals, outreach, tasks, and CRM updates.

- Built-in analytics and dashboards: Provides revenue, funnel, and conversation analytics with customizable dashboards.

- AI communication tools: Includes AI-assisted chat, business phone capabilities, voice agents, and automated meeting intelligence.

- Customer support and success agents: AI-powered chat and support tools for resolving, routing, and managing customer interactions.

Retrieval, integration, and programmable AI frameworks

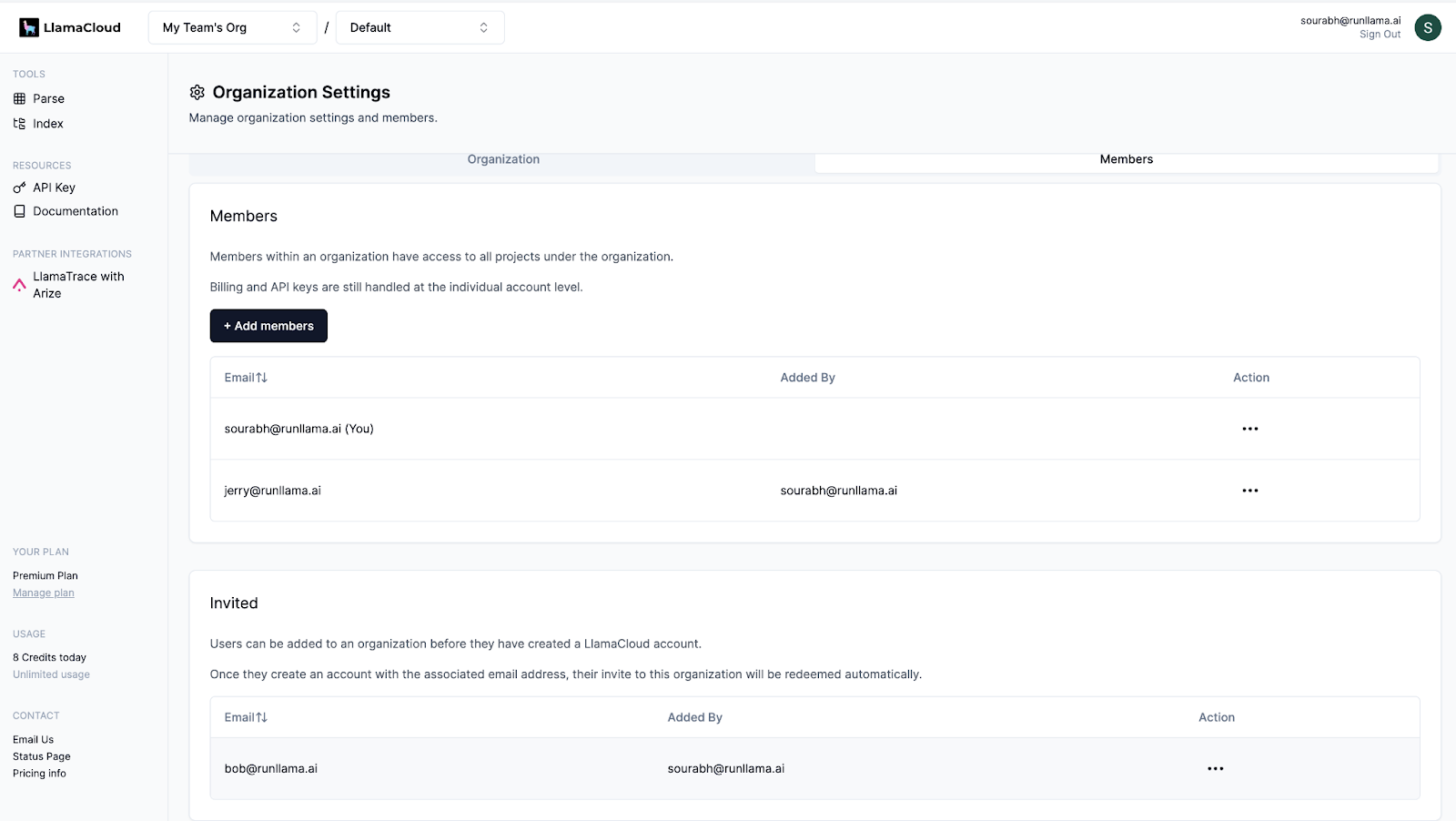

6. LlamaIndex

LlamaIndex is a developer-focused framework for building document-centric AI agents and retrieval-augmented generation (RAG) systems. It provides modular components for parsing, indexing, retrieval, memory, and workflow orchestration, enabling teams to move from raw documents to production-ready agent workflows.

Key features include:

- Document parsing: Supports 90+ unstructured file types, including complex layouts, tables, embedded images, and handwritten notes.

- Modular agent framework: Provides building blocks for state management, memory, human-in-the-loop review, and reflection.

- Event-driven workflows: Async-first workflow engine for orchestrating multi-step AI processes with looping and parallel paths.

- RAG-optimized pipelines: Designed for structured extraction, indexing, and retrieval in enterprise document workflows.

- SDK support: Python and TypeScript SDKs for embedding into existing applications.

- Third-party integrations: Connectors for LLMs, data sources, and vector databases.
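The parse, index, and retrieve flow that LlamaIndex packages can be sketched in a few functions: split documents into overlapping chunks, index them, retrieve the best matches for a query, and assemble a grounded prompt. This is a dependency-free illustration, not LlamaIndex code. Keyword overlap replaces embedding similarity, and the chunk sizes and sample documents are assumptions.

```python
import re
from collections import Counter


def chunk(text: str, size: int = 8, overlap: int = 2) -> list[str]:
    # Fixed-size word windows that overlap, so a fact split across a boundary survives.
    words = text.split()
    step = size - overlap
    return [" ".join(words[i:i + size]) for i in range(0, max(len(words) - overlap, 1), step)]


def tokens(text: str) -> Counter:
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def build_index(docs: dict[str, str]) -> list[tuple[str, str, Counter]]:
    # One entry per chunk: (source id, chunk text, token counts).
    return [(doc_id, c, tokens(c)) for doc_id, text in docs.items() for c in chunk(text)]


def retrieve(index: list[tuple[str, str, Counter]], query: str, k: int = 2) -> list[tuple[str, str]]:
    q = tokens(query)
    # Keyword overlap stands in for embedding similarity to keep the sketch dependency-free.
    ranked = sorted(index, key=lambda entry: sum((q & entry[2]).values()), reverse=True)
    return [(doc_id, text) for doc_id, text, _ in ranked[:k]]


def build_prompt(query: str, hits: list[tuple[str, str]]) -> str:
    sources = "\n".join(f"[{doc_id}] {text}" for doc_id, text in hits)
    return f"Answer using only these sources:\n{sources}\n\nQuestion: {query}"


docs = {
    "policy.pdf": "Refunds are issued within 14 days of a return request.",
    "faq.md": "Shipping to Canada takes five business days.",
}
index = build_index(docs)
question = "How long do refunds take?"
print(build_prompt(question, retrieve(index, question, k=1)))
```

Keeping the source id on every chunk is what lets the final prompt cite where each passage came from.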

7. Semantic Kernel

Semantic Kernel is a lightweight, open-source SDK for integrating AI models into C#, Python, and Java applications. It acts as middleware between language models and existing business logic, translating model outputs into executable function calls. The framework emphasizes modularity, observability, and long-term maintainability in enterprise environments.

Key features include:

- Multi-language support: Production-ready support for C#, Python, and Java with stable versioning.

- Model-to-function orchestration: Converts model-generated requests into structured function calls within existing codebases.

- Plugin architecture: Extend functionality using OpenAPI-based plugins and reusable connectors.

- Enterprise observability: Built-in telemetry, hooks, and filters to monitor and govern AI behavior.

- Future-proof model integration: Swap underlying AI models without rewriting application logic.

- Process automation support: Combine prompts with APIs to automate business workflows.
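Model-to-function orchestration means the model emits a structured request (a function name plus arguments), and the SDK validates and executes it against ordinary application code. The sketch below shows that dispatch step in plain Python. It is not Semantic Kernel's API; the `Kernel` class, the `plugin-function` naming scheme, and the JSON shape of the model output are assumptions.

```python
import inspect
import json
from typing import Callable


class Kernel:
    """Sketch of model-to-function dispatch (not Semantic Kernel's API)."""

    def __init__(self) -> None:
        self.functions: dict[str, Callable] = {}

    def register(self, plugin: str, fn: Callable) -> None:
        self.functions[f"{plugin}-{fn.__name__}"] = fn

    def invoke_model_call(self, model_output: str) -> object:
        call = json.loads(model_output)  # assumed shape: {"name": ..., "arguments": {...}}
        fn = self.functions.get(call["name"])
        if fn is None:
            raise ValueError(f"model requested unknown function {call['name']!r}")
        # Raises TypeError for missing or undeclared arguments before any business logic runs.
        inspect.signature(fn).bind(**call["arguments"])
        return fn(**call["arguments"])


def get_order_status(order_id: int) -> str:  # existing business logic, unchanged
    return "shipped" if order_id == 42 else "processing"


kernel = Kernel()
kernel.register("orders", get_order_status)
print(kernel.invoke_model_call(
    '{"name": "orders-get_order_status", "arguments": {"order_id": 42}}'
))  # shipped
```

Swapping the underlying model only changes what produces `model_output`; the registered functions stay untouched.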

8. AutoAgent

AutoAgent is an open-source, zero-code framework for building and deploying LLM agents using natural language. It allows users to create agents, tools, and workflows through conversational interaction rather than manual configuration. The framework supports both single-agent and multi-agent systems, with built-in mechanisms for iterative self-improvement and workflow generation.

Key features include:

- Natural language agent creation: Build agents and tools entirely through conversational input.

- Zero-code workflow generation: Automatically constructs and orchestrates multi-agent workflows from high-level task descriptions.

- Self-developing architecture: Supports iterative code and workflow generation for controlled customization.

- Multi-agent collaboration: Enables coordinated agent systems for research and complex analysis tasks.

- Model flexibility: Compatible with multiple LLM providers via configurable backends.

- CLI-based deployment: Provides command-line interfaces and containerized environments for execution.

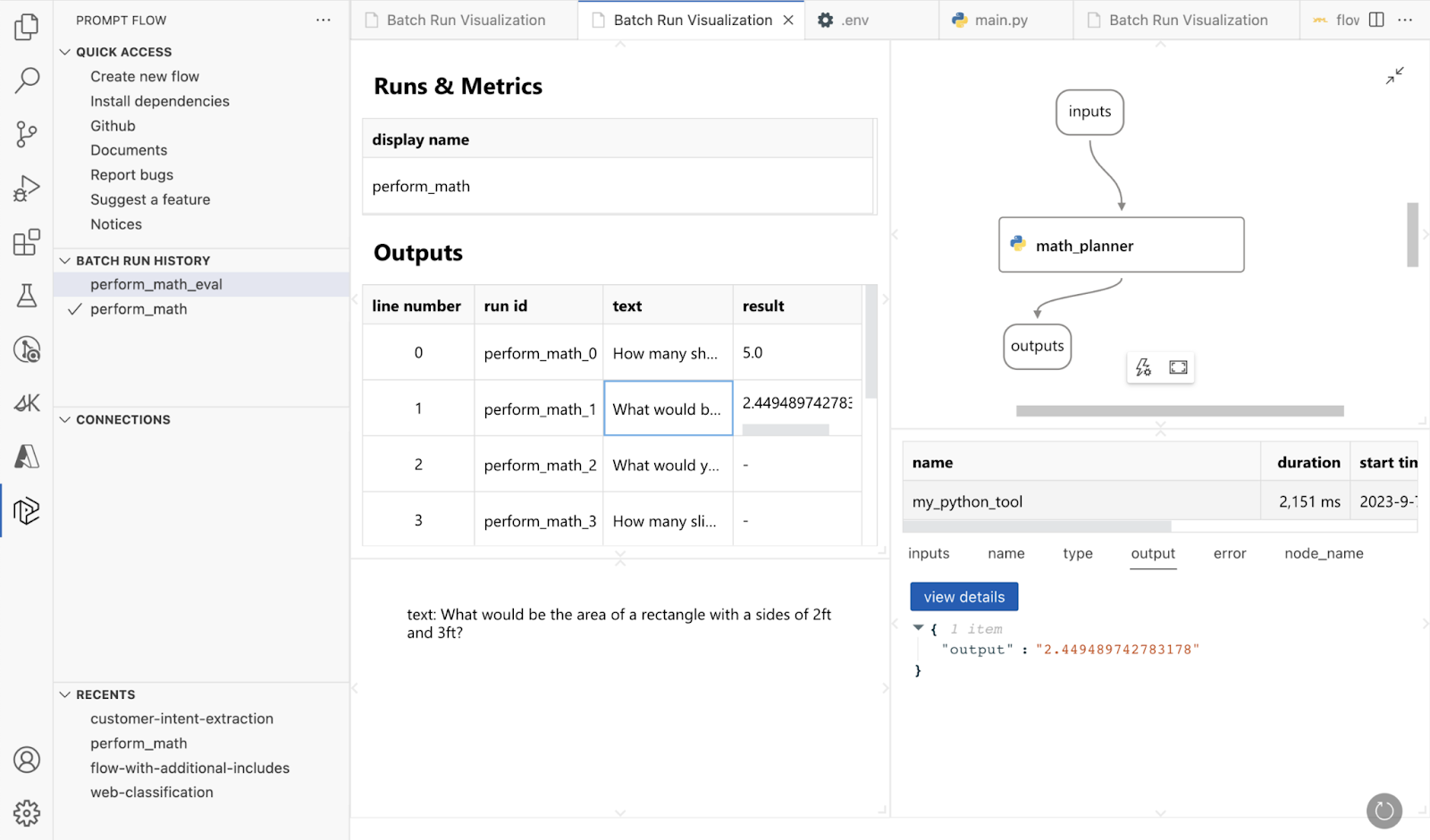

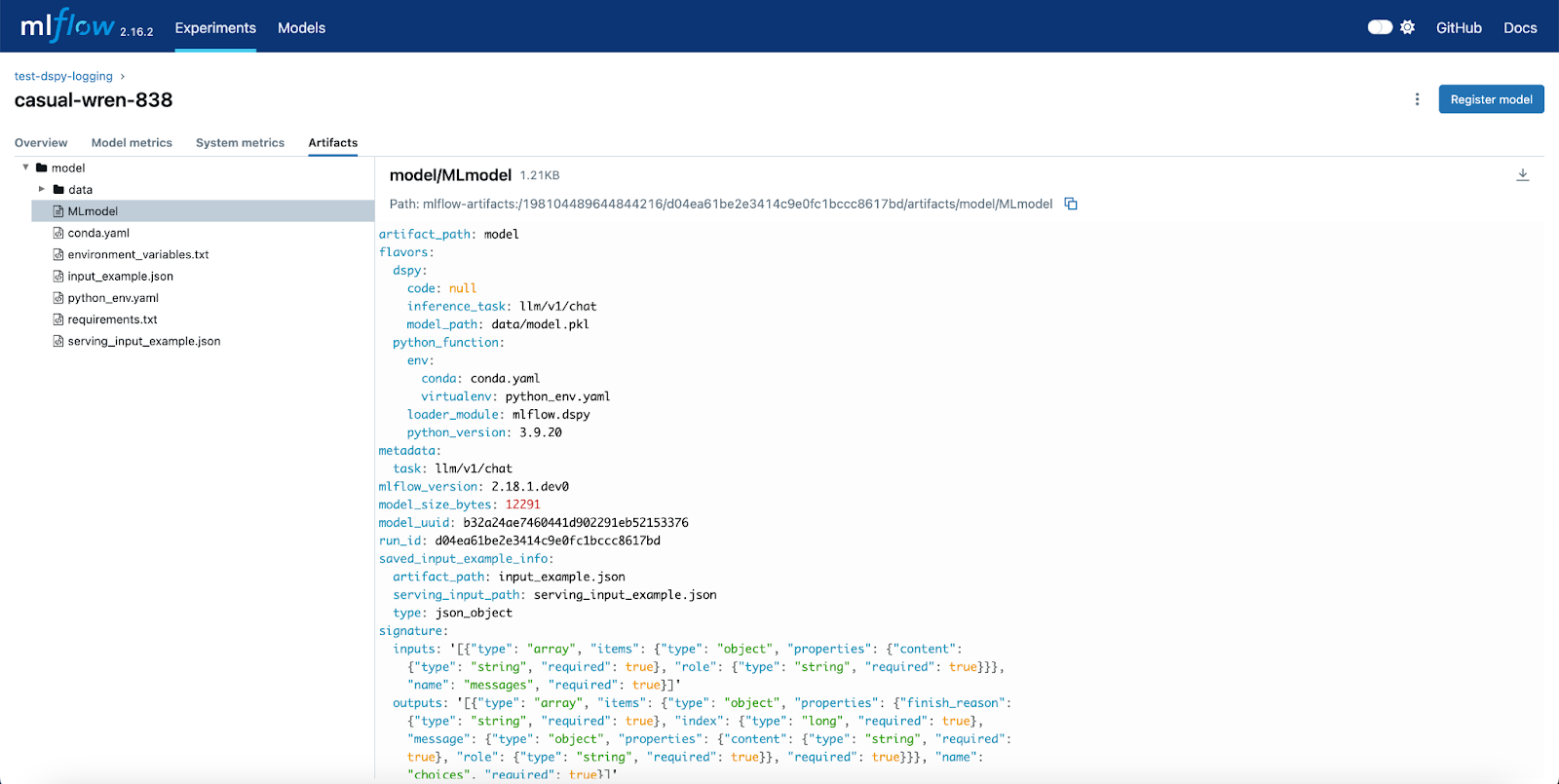

9. DSPy

DSPy (Declarative Self-improving Python) is a declarative framework for building modular AI systems using structured programming instead of manual prompt engineering. Developers define input-output signatures and compose modules such as prediction, reasoning, or agent loops. DSPy compiles these high-level definitions into optimized prompts and, optionally, fine-tuned model weights.

Key features include:

- Declarative AI programming: Define AI behavior through typed input/output signatures rather than handcrafted prompts.

- Composable modules: Use modules such as `Predict`, `ChainOfThought`, and `ReAct` to build multi-stage pipelines and agents.

- Model-agnostic design: Swap underlying language models without rewriting system logic.

- Prompt and weight optimization: Built-in optimizers generate few-shot examples, refine instructions, or fine-tune weights.

- Support for complex pipelines: Build RAG systems, agent loops, and multi-stage workflows as structured programs.

- Optimization tooling: Compile AI programs against task-specific metrics to improve performance.
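DSPy's central move is to treat a prompt as something compiled from a declared signature rather than hand-written. The toy sketch below shows that idea only: it renders a signature and few-shot demonstrations into a prompt and parses the labeled output back into fields. It is not DSPy's API and does not reproduce its optimizers; `Signature`, `compile_prompt`, `parse_output`, and the sentiment task are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Signature:
    """Hypothetical stand-in for a DSPy-style signature: named inputs -> outputs."""
    instructions: str
    inputs: list[str]
    outputs: list[str]


def compile_prompt(sig: Signature, demos: list[dict], **inputs: str) -> str:
    # Render instructions, few-shot demos, and field labels so nobody hand-writes the prompt.
    lines = [sig.instructions, ""]
    for example in demos + [inputs]:
        for f in sig.inputs:
            lines.append(f"{f.capitalize()}: {example[f]}")
        for f in sig.outputs:
            lines.append(f"{f.capitalize()}: {example.get(f, '')}".rstrip())
        lines.append("")
    return "\n".join(lines).rstrip()


def parse_output(sig: Signature, completion: str) -> dict[str, str]:
    # Map "Label: value" lines in the model's completion back onto output fields.
    result = {}
    for line in completion.splitlines():
        key, _, value = line.partition(":")
        if key.strip().lower() in sig.outputs:
            result[key.strip().lower()] = value.strip()
    return result


sig = Signature("Classify the sentiment of the review.", ["review"], ["sentiment"])
prompt = compile_prompt(sig, [{"review": "Loved it", "sentiment": "positive"}],
                        review="Broke after a day")
print(prompt)
print(parse_output(sig, "Sentiment: negative"))
```

In DSPy itself, optimizers go further by choosing which demonstrations and instructions to include based on a metric; this sketch only shows the rendering and parsing that make that search possible.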

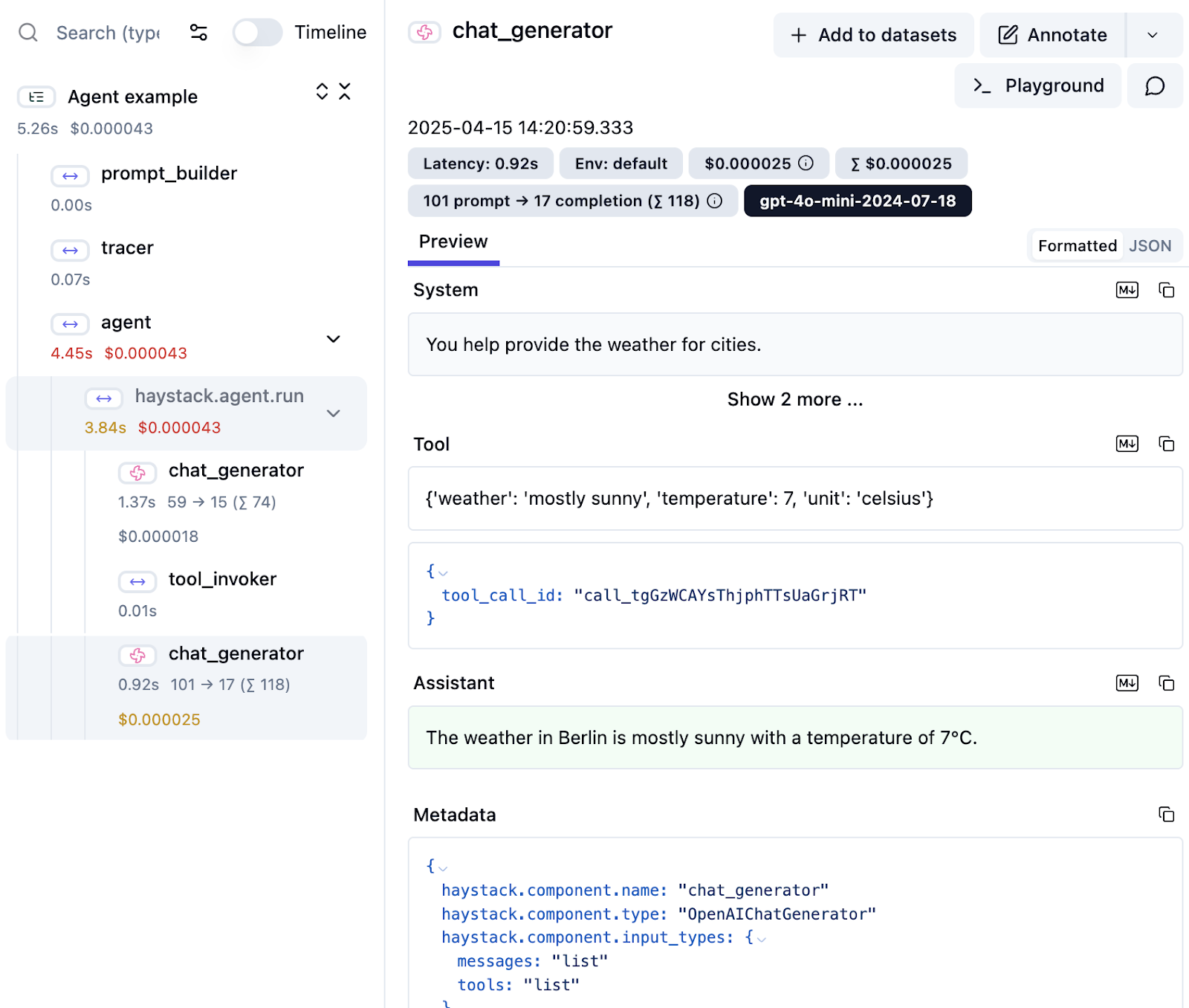

10. Haystack

Haystack is an open-source AI orchestration framework for building production-ready agents, RAG systems, and context-engineered applications. It provides modular pipelines that give developers visibility into retrieval, reasoning, memory, and tool usage. Haystack supports flexible integration with a broad AI ecosystem.

Key features include:

- Modular pipeline architecture: Orchestrate retrieval, reasoning, memory, and tool use with transparent, inspectable components.

- Broad ecosystem integration: Connect to models, vector databases, and search engines without vendor lock-in.

- Production-ready tooling: Use the same composable components from prototype to deployment.

- Enterprise-scale deployment: Serializable, cloud-agnostic pipelines with logging and monitoring support.

- Context engineering support: Design and control how context is retrieved and passed through agent workflows.
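Haystack pipelines are built by adding named components and connecting one component's output to another's input. The plain-Python sketch below borrows that vocabulary (`add_component`, and `connect` with `component.socket` strings) to show why such pipelines are inspectable: every intermediate output is recorded. It is not Haystack's implementation. It assumes components are added in dependency order, and the retriever and prompt builder are toy stand-ins.

```python
from typing import Callable


class MiniPipeline:
    """Sketch of an inspectable component pipeline (not Haystack's implementation)."""

    def __init__(self) -> None:
        self.components: dict[str, Callable[..., dict]] = {}
        self.edges: list[tuple[str, str, str, str]] = []  # (sender, output, receiver, input)
        self.trace: list[tuple[str, dict]] = []

    def add_component(self, name: str, fn: Callable[..., dict]) -> None:
        self.components[name] = fn

    def connect(self, sender: str, receiver: str) -> None:
        src, out = sender.split(".")
        dst, inp = receiver.split(".")
        self.edges.append((src, out, dst, inp))

    def run(self, data: dict[str, dict]) -> dict[str, dict]:
        inputs = {name: dict(data.get(name, {})) for name in self.components}
        results: dict[str, dict] = {}
        for name, fn in self.components.items():  # assumes dependency order
            results[name] = fn(**inputs[name])
            self.trace.append((name, results[name]))  # every intermediate output is kept
            for src, out, dst, inp in self.edges:
                if src == name:
                    inputs[dst][inp] = results[name][out]
        return results


DOCS = ["Kafka streams events.", "Cassandra stores state.", "OpenSearch runs vector search."]


def retriever(query: str) -> dict:
    hits = [d for d in DOCS if any(w in d.lower() for w in query.lower().split())]
    return {"documents": hits}


def prompt_builder(query: str, documents: list[str]) -> dict:
    return {"prompt": f"Context: {' '.join(documents)}\nQuestion: {query}"}


pipe = MiniPipeline()
pipe.add_component("retriever", retriever)
pipe.add_component("prompt_builder", prompt_builder)
pipe.connect("retriever.documents", "prompt_builder.documents")
out = pipe.run({"retriever": {"query": "vector search"},
                "prompt_builder": {"query": "vector search"}})
print(out["prompt_builder"]["prompt"])
```

The `trace` list is the sketch's version of pipeline observability: it shows exactly which context reached the prompt.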

Related content: Read our guide to agentic AI tools (coming soon)

Tips from the expert

David vonThenen

Senior AI/ML Engineer

As an AI/ML engineer and developer advocate, David lives at the intersection of real-world engineering and developer empowerment. He thrives on translating advanced AI concepts into reliable, production-grade systems all while contributing to the open source community and inspiring peers at global tech conferences.

In my experience, here are tips that can help you better apply and optimize agentic AI frameworks for scalable, autonomous systems:

- Prototype agents using synthetic tasks before real integration: Before connecting agents to real APIs or sensitive data, design synthetic environments to simulate goals, failure modes, and tool usage. This helps refine planning logic and test agent robustness under controlled conditions.

- Design tool wrappers with explicit preconditions and postconditions: Tools exposed to agents should declare what they need (inputs) and guarantee (outputs). This prevents agents from misusing tools and improves interpretability, composability, and automated debugging of workflows.

- Build shared memory abstractions for multi-agent collaboration: Instead of duplicating memory modules per agent, create shared, versioned memory stores (e.g., vector + relational) with scoped access. This allows agents to reason jointly while maintaining separation of responsibilities.

- Use role archetypes to constrain agent behavior in multi-agent setups: Define role-based personas like “Planner,” “Researcher,” or “Reviewer,” each with limited tools, memory access, and goals. This minimizes errors, supports explainability, and aligns agents with human-readable workflows.

- Log intermediate reasoning chains and decisions for traceability: Instrument all agent decisions (tool choice, thought steps, memory updates) with structured logs or metadata. This is essential for auditing, debugging, and training future self-improving agents via offline RL or fine-tuning.
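The tool-contract and logging tips can be combined in one small wrapper. The sketch below is a hypothetical decorator (not part of any framework) that checks a precondition before a tool runs, checks a postcondition on its result, and appends a structured JSON record for every call. The `issue_refund` tool, its limits, and the `AUDIT_LOG` list are invented for illustration.

```python
import functools
import json
from typing import Callable

AUDIT_LOG: list[str] = []  # hypothetical sink; in practice ship these JSON lines to a log pipeline


def tool(pre: Callable[..., bool], post: Callable[[object], bool]):
    """Hypothetical decorator: declares a tool's contract and logs every call."""
    def wrap(fn: Callable[..., object]) -> Callable[..., object]:
        @functools.wraps(fn)
        def inner(**kwargs: object) -> object:
            record = {"tool": fn.__name__, "args": kwargs}
            if not pre(**kwargs):
                AUDIT_LOG.append(json.dumps({**record, "status": "precondition_failed"}))
                raise ValueError(f"{fn.__name__}: precondition failed for {kwargs}")
            result = fn(**kwargs)
            ok = post(result)
            AUDIT_LOG.append(json.dumps(
                {**record, "result": result, "status": "ok" if ok else "postcondition_failed"}
            ))
            if not ok:
                raise RuntimeError(f"{fn.__name__}: postcondition failed")
            return result
        return inner
    return wrap


@tool(pre=lambda amount, currency: amount > 0 and currency in {"USD", "EUR"},
      post=lambda r: isinstance(r, dict) and r.get("status") in {"approved", "declined"})
def issue_refund(amount: float, currency: str) -> dict:
    return {"status": "approved" if amount <= 500 else "declined"}


print(issue_refund(amount=120, currency="USD"))
try:
    issue_refund(amount=-5, currency="USD")
except ValueError as err:
    print("rejected:", err)
print(AUDIT_LOG[-1])
```

The wrapper accepts keyword arguments only, which matches how model-generated tool arguments usually arrive and keeps each audit record unambiguous.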

How to Choose the Right Agentic AI Framework

Here are some of the factors that organizations should consider when choosing an agentic AI framework.

1. Assess Autonomy, Planning and Memory Features

When choosing an agentic AI framework, start by evaluating its support for autonomy, planning, and memory management. Some frameworks emphasize task decomposition, long-term memory, and self-correction, which enable agents to handle complex, multi-stage processes with minimal oversight. Assess whether the framework offers abstractions for persistent context, stateful interactions, and the ability for agents to reason over time, as these are crucial for most real-world applications.

Additionally, consider the flexibility of the planning mechanisms: can agents dynamically react to new information or environmental changes? Frameworks that support fine-grained control over memory, context, and planning logic ensure that AI agents remain effective and reliable as task complexity grows. Review documentation, inspect example architectures, and test out sample agents to validate these capabilities before making a commitment.

2. Performance Under Scale

Performance at scale is key when deploying agentic AI solutions in production. Some frameworks are optimized for prototyping but may falter under high concurrency, large user bases, or heavy data processing loads. Review performance benchmarks, parallelization strategies, and scalability features such as distributed orchestration or asynchronous execution. Good frameworks allow agents to operate efficiently even in demanding, real-world environments.

It’s also important to understand how a framework handles resource allocation and system bottlenecks. Scalable agentic frameworks should provide mechanisms for task prioritization, error handling under load, and monitoring resource utilization. Consider running scaled-up simulations or load tests with the target workload to reveal potential performance issues.
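One common way to keep agents efficient under load is asynchronous execution with an explicit concurrency cap, so bursts of work do not exceed an upstream rate limit. The sketch below uses Python's `asyncio` with a semaphore and a per-call timeout. `call_model` is a hypothetical stand-in that sleeps instead of calling a real model, and the limit of 5 is an assumption.

```python
import asyncio
import time

MAX_CONCURRENCY = 5  # hypothetical limit, e.g. a model provider's rate budget


async def call_model(prompt: str) -> str:
    await asyncio.sleep(0.1)  # stands in for the network latency of an LLM or API call
    return prompt.upper()


async def handle(prompt: str, limiter: asyncio.Semaphore) -> str:
    async with limiter:  # at most MAX_CONCURRENCY calls in flight at once
        return await asyncio.wait_for(call_model(prompt), timeout=2.0)


async def main(prompts: list[str]) -> list[str]:
    limiter = asyncio.Semaphore(MAX_CONCURRENCY)
    return await asyncio.gather(*(handle(p, limiter) for p in prompts))


start = time.perf_counter()
results = asyncio.run(main([f"task {i}" for i in range(20)]))
elapsed = time.perf_counter() - start
print(len(results), f"{elapsed:.2f}s")
```

With 20 tasks and a cap of 5, the run should take roughly four latency periods rather than twenty; a load test against the real workload is what confirms whether the chosen cap holds up.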

3. Support for Multi-Agent Coordination

Multi-agent coordination can dramatically increase agentic system effectiveness, allowing distributed problem-solving and division of labor. Evaluate whether a framework supports multiple agents working in parallel or collaborative arrangements. Look for features like role management, inter-agent communication protocols, and workflow orchestration for multi-agent tasks.

Frameworks designed for multi-agent scenarios often include mechanisms for conflict resolution, consensus-building, and shared memory architectures. These are essential for applications requiring agents to negotiate resources, jointly plan actions, or hand off tasks smoothly.

4. Security and Governance

Security and governance are paramount as agentic AI solutions interact with sensitive data and critical business systems. Assess whether a framework provides granular access control, audit logging, and policy enforcement tools. Effective governance features help ensure agents operate within defined ethical, compliance, and operational boundaries while protecting against misuse.

Consider also the framework’s support for monitoring, explainability, and traceability of agent actions. Strong governance tools are needed to track decision-making processes, investigate failures, and meet regulatory requirements.

5. Tool and API Integration

Finally, the ability to integrate with external tools, APIs, and data sources directly impacts the usefulness of an agentic AI framework. Check for support for standard connectors, plugin architectures, and customization points for integrating proprietary or third-party services. Frameworks with robust integration capabilities let agents leverage a broader set of tools for task completion, resulting in more capable, adaptable solutions.

Evaluate the simplicity of adding or updating tool integrations and the framework’s support for secure, well-documented APIs. Consider how agent workflows handle API errors, changes, or deprecations to ensure reliability in production environments.
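A common pattern for handling transient API errors is retrying with exponential backoff and jitter while letting non-retryable errors fail fast. The sketch below shows that pattern in plain Python; `TransientError`, `flaky_weather_api`, and the retry parameters are hypothetical.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class TransientError(Exception):
    """Hypothetical: e.g. an HTTP 429 or 503 from an upstream API."""


def with_retries(fn: Callable[[], T], attempts: int = 4, base_delay: float = 0.05) -> T:
    if attempts < 1:
        raise ValueError("attempts must be >= 1")
    for attempt in range(1, attempts + 1):
        try:
            return fn()
        except TransientError:
            if attempt == attempts:
                raise  # retry budget spent: surface the error to the agent
            # Exponential backoff with full jitter spreads out retries from many agents.
            time.sleep(random.uniform(0, base_delay * 2 ** (attempt - 1)))
    # Other exception types are never caught, so they propagate on the first failure.


calls = {"n": 0}


def flaky_weather_api() -> dict:
    calls["n"] += 1
    if calls["n"] < 3:
        raise TransientError("503 Service Unavailable")
    return {"temp_c": 21}


result = with_retries(flaky_weather_api)
print(result, calls["n"])  # {'temp_c': 21} 3
```

Re-raising the final error instead of swallowing it lets the agent's planner decide whether to try another tool or report the failure.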

Instaclustr and the Rise of Agentic AI Frameworks

Instaclustr, a trusted provider of fully managed open source data infrastructure, plays a pivotal role in enabling the seamless integration of agentic AI frameworks into modern business ecosystems. By offering managed services for technologies like Apache Cassandra, Apache Kafka, PostgreSQL, ClickHouse, OpenSearch and Cadence, Instaclustr provides the robust, scalable, and reliable data backbone required for advanced AI systems to operate effectively. These open source technologies are critical for handling the vast amounts of data that agentic AI frameworks rely on to learn, adapt, and make autonomous decisions.

Agentic AI frameworks are designed to create AI systems that act as independent agents, capable of perceiving their environment, learning from it, and making decisions to achieve specific objectives. These frameworks require a data infrastructure that can support real-time data ingestion, processing, and storage at scale. Instaclustr’s platform ensures that these requirements are met with enterprise-grade security, high availability, and 24/7 support, allowing businesses to focus on developing and deploying their AI solutions without worrying about the complexities of managing the underlying infrastructure.

Integrating managed open source services from Instaclustr with agentic AI frameworks unlocks new possibilities for innovation and automation. For example, an agentic AI system designed for supply chain optimization can leverage Instaclustr for Apache Kafka for real-time data streaming and Instaclustr for Apache Cassandra for scalable data storage. This enables the AI system to process live data from multiple sources, adapt to changing conditions, and make autonomous decisions to improve efficiency and reduce costs. By combining Instaclustr's reliable data infrastructure with the adaptability of agentic AI frameworks, organizations can build intelligent systems that drive smarter decision-making and deliver transformative business outcomes.
