What are open source vector database solutions?

Open source vector database solutions are data management systems focused on storing, indexing, and searching high-dimensional vectors: numerical representations derived mostly from machine learning models. These systems handle the challenges of vector similarity search, such as finding the closest vectors to a given query within a large dataset, which is a core requirement for applications in artificial intelligence, search engines, recommendation systems, and natural language processing.

By being open source, these solutions offer transparent access to their source code, allowing organizations to audit, modify, and extend the database as needed. Unlike traditional relational or NoSQL databases, vector databases are optimized for operations on float arrays and feature sets that enable rapid nearest neighbor search at scale.

Their architectures typically accommodate the high dimensionality and performance demands of deep learning use cases. Open source options eliminate licensing costs while fostering a community-driven development model that accelerates innovation, provides extensibility, and encourages community support.

Core capabilities to expect in an open-source vector database solutions

Efficient similarity search

A primary feature of open-source vector databases is efficient similarity search, a process enabling rapid identification of the most similar vectors to a given input. This operation is fundamental for applications like image or document retrieval, semantic search, and recommendation engines.

Vector databases achieve this through specialized techniques such as approximate nearest neighbor (ANN) algorithms, which dramatically reduce search times compared to brute-force methods. ANN indexes, like HNSW or FAISS-based structures, allow scalable search even in datasets with billions of records and hundreds or thousands of dimensions.

Metadata filtering, faceting, and pre/post-filters

A vector database should incorporate metadata management for contextual queries. Metadata filtering lets users narrow search results using structured attributes such as tags, categories, timestamps, or any custom fields attached to the vectors. This capability enables, for example, filtering product recommendations by price range or user demographic.

Faceting and the use of pre/post-filters further refine search results by applying additional constraints before or after vector similarity evaluation. Faceting lets users group results into categories or ranges for quick analysis, similar to navigation in eCommerce or enterprise search.

Pre-filtering limits the vectors considered during similarity search to a qualifying subset, improving speed and relevance. Post-filters allow further refinement after candidate vectors have been retrieved, such as applying access controls or more complex business logic.

Real-time upserts, deletes, and time-to-live policies

Real-time upserts (atomically updating or inserting records_ allow for immediate refinements to vectors and their associated metadata without introducing downtime or complex migrations. This capability is especially important for applications like personalized recommendations or rapidly changing search corpora, where the underlying data is constantly updated.

Support for deletions and automatic time-to-live (TTL) policies ensures that expired, obsolete, or sensitive data doesn’t linger in the search index or database. This is also important for compliance, as regulations increasingly require timely data removal. Open-source vector databases with robust upsert, delete, and TTL functionality can maintain accurate datasets while simplifying adherence to data governance and privacy standards.

Multi-tenancy, namespaces, quotas, and RBAC

Enterprises often require vector database solutions to support multi-tenancy, enabling multiple users, departments, or applications to operate independently within the same physical infrastructure. Multi-tenancy prevents cross-tenant data leaks while maintaining logical and resource separation, crucial for SaaS systems or organizations running several AI models in parallel.

Namespaces provide further isolation at the logical data structure level, so teams can manage separate collections of vectors and metadata without risk of conflict or accidental data exposure. Resource quotas and role-based access control (RBAC) are equally important for large-scale or sensitive deployments. Quotas manage the allocation of compute, memory, or storage to prevent one tenant from monopolizing resources, while RBAC enforces permissions for different users or groups.

Observability hooks, tracing, and query explainability

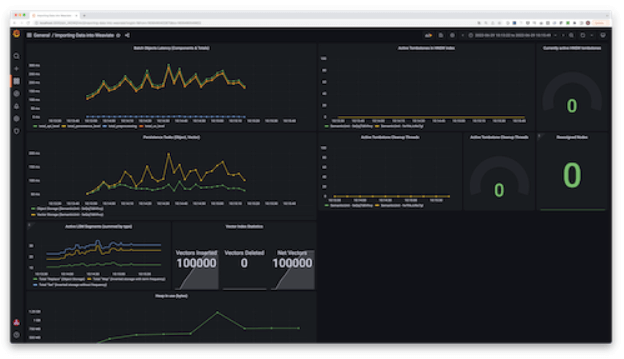

For production use, operational visibility into database internals is crucial. Observability hooks enable integration with monitoring systems, surfacing metrics such as query performance, index health, and resource utilization. This visibility enables early detection of slowdowns or resource bottlenecks, supporting proactive capacity planning and incident response.

Tracing capabilities provide end-to-end insight into individual query execution paths, helping teams diagnose latency issues or bugs and optimize application integrations. Query explainability is an emerging feature, allowing users to understand why particular results were returned for a given search request. It surfaces details on index selection, scoring algorithms, and filter applications.

Tips from the expert

Kassian Wren

Open Source Technology Evangelist

Kassian Wren is an Open Source Technology Evangelist specializing in OpenSearch. They are known for their expertise in developing and promoting open-source technologies, and have contributed significantly to the OpenSearch community through talks, events, and educational content

In my experience, here are tips that can help you better operate and choose open source vector database solutions in production:

- Treat embeddings like versioned data, not “just columns”: Store

embedding_model,model_version,prompt/template_version, andnormalizationas first-class fields; dual-write old+new embeddings during upgrades so you can A/B recall and roll back safely. - Build a “ground-truth harness” before you benchmark engines: Maintain a curated evaluation set and periodically compute exact neighbors on a sampled slice (even if it’s slow) to measure real

recall@kand “win-rate” against your current stack—otherwise ANN tuning becomes guesswork. - Design filters around selectivity, not convenience: High-selectivity filters should be applied early; low-selectivity filters often perform better after ANN retrieval. Precompute “filter buckets” (e.g.,

tenant_id,region,doc_type) to avoid expensive wide scans. Plan for vector drift as an operational metric:Monitor embedding distribution shift (norms, cosine similarity histograms, centroid movement) and retrieval stability over time; drift is often the hidden cause of “search got worse” incidents.Make index rebuild a blue/green deployment, not a maintenance event:For large collections, rebuild in parallel (new index/collection), shadow traffic for quality + latency, then cut over. It’s the cleanest path for changing ANN parameters, quantization settings, or schema.

Notable open source vector database solutions

1. Opensearch

OpenSearch is a search and analytics engine that now includes a vector database capability through its Vector Engine. It enables similarity search on unstructured data like text, images, and audio by storing and indexing embeddings generated from machine learning models.

Key features include:

- Optimized vector engine: Supports high-dimensional vector storage and similarity search using k-nearest neighbors for semantic and contextual querying

- Hybrid and multimodal search: Enables combined vector and keyword (sparse) search, as well as multimodal search using a single platform

- Real-time data ingestion and indexing: Continuously collects and indexes data with support for data transformation and enrichment during ingestion

- GPU-accelerated search: Leverages GPU hardware to scale vector indexing and search performance across large datasets

- Native AI integration: Works with large language models and AI pipelines for semantic search, recommendation systems, and RAG use cases

- Unified query and analytics platform: Combines search, analytics, observability, and security analytics in one extensible engine

2. Apache Cassandra

Apache Cassandra introduced vector search in version 5.0 to support AI and machine learning workloads. By enabling storage and similarity search over embeddings within its high-availability infrastructure, Cassandra brings vector capabilities to a scalable, distributed database system. It uses Storage Attached Indexing (SAI) to support retrieval of semantically similar items.

Key features include:

- Native vector data type in CQL: Adds first-class support for storing embeddings as high-dimensional float arrays directly in the Cassandra schema

- Semantic similarity search: Enables contextual querying using vector embeddings for tasks like recommendation, classification, and retrieval

- Storage Attached Indexing (SAI): Uses column-level indexes for efficient, high-throughput similarity search on vector data

- LLM-friendly embeddings: Supports embeddings generated from large language models for natural language processing and other AI applications

- Extensible indexing architecture: Validates the modularity of SAI, allowing future expansion of search capabilities on vector and structured data

3. pgvector

pgvector is an open-source extension that adds vector similarity search capabilities directly to PostgreSQL. It enables developers to store, index, and query vectors alongside structured data using the familiar SQL interface. Designed to support both exact and approximate nearest neighbor search, pgvector works with PostgreSQL features like ACID compliance, JOINs, and point-in-time recovery.

Key features include:

- Native PostgreSQL integration: Add vector search to existing PostgreSQL tables and queries without needing a separate database

- Multiple distance metrics supported: Choose from L2, cosine, inner product, L1, Hamming, and Jaccard distances for similarity queries

- Exact and approximate search: Supports both brute-force (exact) and approximate search using HNSW or IVFFlat indexes

- Flexible indexing: Fine-tune HNSW and IVFFlat parameters for recall, speed, and memory usage; supports up to 64,000 dimensions depending on vector type

- Rich querying capabilities: Use standard SQL to find nearest neighbors, filter by distance thresholds, compute similarities, and perform group-wise aggregations

4. Milvus

Milvus is an open-source vector database to support large-scale, AI-driven applications. It allows developers to store, index, and query collections of embeddings with low latency. Its architecture supports both lightweight prototyping on a laptop and distributed production systems operating at billions of vectors.

Key features include:

- Multiple deployment modes: Choose from Milvus Lite (runs in notebooks via pip), Milvus Standalone (single-node), or Milvus Distributed (scales horizontally to billions of vectors)

- High-speed vector search: Supports efficient similarity search at scale, using optimized indexing for low-latency queries even on large datasets

- Rich search capabilities: Enables hybrid search, combining vector similarity with metadata filters for more refined results

- AI tool integration: Works with LangChain, LlamaIndex, Hugging Face, OpenAI, and others to accelerate GenAI development

- Feature-rich API: Provides operations like collection creation, insertions, deletions, and queries via a simple client interface

5. Qdrant

Qdrant is an open-source vector database purpose-built for fast and scalable similarity search on large-scale vector data. It focuses on delivering low-latency queries, high throughput, and precise results through a combination of custom HNSW optimizations and compression techniques.

Key features include:

-

- High-speed performance: Supports more requests per second (RPS) than many alternatives, with optimized indexing and low-latency ANN search

- Compression techniques: Supports scalar, product, and binary quantization to reduce memory usage and accelerate search, enabling 40× performance gains in some cases

- Flexible deployment options: Run in fully managed Qdrant Cloud, hybrid cloud, or private cloud environments with support for AWS, GCP, and Azure

- Filtering and payloads: Rich filtering capabilities on vector metadata, supporting strings, numerical ranges, geo-locations, and other payload types

- Enterprise-grade security and reliability: Includes access management, backup and disaster recovery, and enterprise support options

Source: QDrant

6. Weaviate

Weaviate is an open-source vector database to simplify the development of AI-powered applications. It combines vector search with traditional keyword search, supports integration with popular machine learning models, and offers a flexible architecture for scaling from prototypes to production.

Key features include:

- Hybrid search built-in: Combines vector search and BM25 keyword search in a single engine, enabling semantic and lexical matching without extra configuration

- Plug-and-play ML integrations: Connects to over 20 machine learning models and frameworks, making it easy to vectorize data or bring your own embeddings

- Native RAG support: Securely integrate proprietary data with language models to power retrieval-augmented generation use cases out-of-the-box

- Filtering at scale: Supports fast, complex queries over large datasets, including filtering on metadata and nested fields

- Flexible deployment options: Run Weaviate self-hosted, in a managed service, or deploy it via Kubernetes in your own cloud environment

Source: Weaviate

7. Vald

Vald is a cloud-native, distributed vector search engine optimized for fast and scalable approximate nearest neighbor (ANN) search. It uses the NGT algorithm to enable high-speed similarity search across datasets, supporting billions of high-dimensional vectors. Designed with Kubernetes in mind, Vald provides horizontal scalability and fault tolerance.

Key features include:

- Scalable cloud-native architecture: Designed for Kubernetes, supports horizontal scaling across memory and CPU for high-throughput environments

- Fast ANN search with NGT: Uses the NGT algorithm for fast and accurate approximate nearest neighbor search across dense vector data

- Asynchronous automatic indexing: Performs background indexing without blocking search operations, avoiding downtime during graph updates

- Distributed and replicated indexing: Distributes vector indexes across multiple agents with automatic replication and self-healing in case of node failures

- Customizable ingress/egress filtering: Supports flexible gRPC-based filters at data input and output stages for fine-grained control over vector processing

Related content: Read our guide to vector database open source

Choosing an open source vector database solution

Selecting the right open source vector database depends on the needs of your application, infrastructure, and operational environment. While all solutions covered offer core capabilities such as vector search, metadata filtering, and real-time updates, the trade-offs in performance, scalability, integration, and deployment flexibility can vary widely. Here are the key factors to consider when evaluating and choosing a vector database:

- Search performance and indexing strategy: Consider the speed and accuracy of similarity search across different indexing methods. Evaluate whether the database supports ANN techniques like HNSW or IVFFlat and how tunable they are for your recall/latency trade-offs.

- Scalability and deployment model: Assess how well the solution scales with your data size and query volume. Determine if the database offers support for distributed operation, cloud-native deployments (e.g., Kubernetes), or lightweight local modes for development.

- Integration with AI workflows: Look for native support or compatibility with your ML/AI stack, including vectorizers, model inference pipelines, and RAG architectures. Integration with frameworks like LangChain, Hugging Face, or LlamaIndex may be critical for GenAI use cases.

- Filtering and query flexibility: Evaluate the expressiveness of query capabilities, including support for metadata filtering, hybrid search (vector + keyword), and logical operators. This is especially important for applications requiring precise control over search results.

- Operational features and observability: Production readiness depends on observability, upsert/delete support, TTL policies, and ease of monitoring. Look for built-in metrics, tracing tools, and support for automated index maintenance and backups.

- Security, multi-tenancy, and access control: Consider enterprise features such as RBAC, tenant isolation, quota management, and integration with identity providers. These are essential in multi-user environments and regulated industries.

- Community, ecosystem, and maturity: An active open source community, detailed documentation, and a growing ecosystem of clients and plugins can accelerate development and provide long-term support. Maturity often correlates with reliability and stability in production.

By aligning these factors with your workload characteristics (such as data dimensionality, update frequency, and latency tolerance) you can choose a vector database that meets your performance goals and operational constraints.

Conclusion

Open source vector databases have matured into essential infrastructure for AI-driven systems, offering specialized indexing, low-latency search, and flexible integration with machine learning pipelines. The top solutions demonstrate that open source tools can match or exceed proprietary offerings in performance, scalability, and extensibility. Choosing the right vector database requires careful evaluation of workload patterns, data architecture, and operational needs.