What is Kafka as a Service?

Kafka as a Service (KaaS) refers to the practice of using a managed Apache Kafka platform provided by a third-party vendor, rather than self-managing a Kafka cluster. This approach lets users benefit from Kafka’s distributed streaming capabilities without the complexities of infrastructure setup, scaling, and maintenance. The vendor handles the underlying infrastructure, while users focus on building and running the applications that use Kafka for data streaming.

Providers offer access to fully managed Kafka through APIs or web-based dashboards, abstracting away the cluster management layer. By handling the heavy lifting, Kafka as a Service gives organizations faster time-to-value for event streaming projects. The provider ensures reliable performance, handles scaling, automates backups, and provides patches for vulnerabilities.

Customers pay for what they use and can rely on consistent SLAs around uptime, data retention, and throughput. As a result, teams can rapidly integrate real-time data pipelines into their products, analytics, and business processes, benefiting from Kafka’s reliability and scalability without the intensive overhead.

Why organizations choose managed Kafka over self-hosted

Running Kafka in-house requires deep expertise in distributed systems and significant resources to ensure stability, performance, and security. Self-hosting means that internal teams are responsible for provisioning hardware, managing operating systems, configuring clusters, upgrading software, scaling infrastructure, and responding to incidents.

This operational overhead can distract from a team’s core business objectives, slow innovation, and introduce risk if not managed effectively. Keeping pace with Kafka’s rapid evolution, tuning clusters for performance, and ensuring regulatory compliance make it even more challenging.

Managed Kafka services eliminate much of this burden, shifting operational responsibility to experts who maintain the underlying infrastructure day and night. Organizations benefit from rapid provisioning, hands-off scaling, and included monitoring. Critical incident response and data protection are handled by the service provider, often with higher reliability than in-house operations can guarantee.

Related content: Read our guide to Kafka architecture

Core features of a fully managed Kafka service

Serverless, elastic scaling

A standout feature of managed Kafka services is serverless, elastic scaling. Cluster resources adjust automatically and on demand, without manual intervention from users. As workload increases or decreases, the service dynamically provisions or decommissions brokers, partitions, and storage to match throughput and retention needs.

This ensures efficient resource usage and cost control, preventing over-provisioning during slow periods and removing the risk of bottlenecking during data spikes. The scalability is transparent to the user, managed entirely by the service provider behind the scenes. Teams no longer need to estimate future load or pre-scale clusters, as the platform adapts to workload changes.
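To give a rough sense of the sizing arithmetic that elastic scaling takes off a team's plate, here is a minimal Python sketch. It is not any provider's algorithm: the `Workload` fields, the 100 MB/s per-broker capacity, and the 30% headroom are all assumptions chosen for illustration.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Workload:
    ingress_mb_per_s: float   # average producer throughput (assumed figure)
    retention_hours: float    # how long data must be kept
    replication_factor: int   # copies of each partition


def brokers_needed(w: Workload, per_broker_mb_per_s: float, headroom: float = 0.3) -> int:
    """Brokers required to absorb replicated write traffic with spare headroom."""
    replicated = w.ingress_mb_per_s * w.replication_factor
    usable = per_broker_mb_per_s * (1.0 - headroom)
    # Never go below the replication factor, or replicas cannot sit on distinct brokers.
    return max(w.replication_factor, math.ceil(replicated / usable))


def storage_needed_gb(w: Workload) -> float:
    """Total disk needed to hold the retention window across all replicas."""
    seconds = w.retention_hours * 3600
    return w.ingress_mb_per_s * seconds * w.replication_factor / 1024


# Hypothetical quiet and peak workloads for the same cluster.
quiet = Workload(ingress_mb_per_s=20, retention_hours=24, replication_factor=3)
spike = Workload(ingress_mb_per_s=200, retention_hours=24, replication_factor=3)

for label, w in (("quiet", quiet), ("spike", spike)):
    print(label, brokers_needed(w, per_broker_mb_per_s=100), round(storage_needed_gb(w)))
```

Under these assumed numbers the quiet period needs three brokers and the spike needs nine. A self-hosted team would have to provision for the spike in advance, while an elastic service can move between the two.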

Automated provisioning, configuration and maintenance

Managed Kafka solutions automate the provisioning of new clusters, reducing setup time from days or weeks to minutes. Users can deploy clusters through simple user interfaces or APIs with a few configuration choices such as region and throughput requirements. This eliminates the need to manually select hardware, configure network settings, or tune Kafka parameters.

Service providers pre-apply best practices and recommendations for optimal performance and reliability. Providers also handle ongoing maintenance tasks including patching for security vulnerabilities, applying updates, and routine health checks. Regular maintenance windows are coordinated to minimize disruption, and rolling upgrades are performed to keep systems current with minimal downtime.
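As an illustration of how few choices API-based provisioning typically asks for, the following Python sketch builds and validates a cluster request body. It does not reflect any real provider's API: the `ClusterRequest` fields, the region list, and the JSON shape are all hypothetical.

```python
import json
from dataclasses import asdict, dataclass

# Hypothetical region list; a real provider publishes its own.
ALLOWED_REGIONS = {"us-east-1", "eu-west-1", "ap-southeast-2"}


@dataclass(frozen=True)
class ClusterRequest:
    """The handful of choices a user makes; everything else is left to the provider."""
    name: str
    region: str
    target_throughput_mb_per_s: int

    def validate(self) -> None:
        if self.region not in ALLOWED_REGIONS:
            raise ValueError(f"unsupported region: {self.region}")
        if self.target_throughput_mb_per_s <= 0:
            raise ValueError("throughput must be positive")

    def to_json(self) -> str:
        """Serialize the request body after checking it is well formed."""
        self.validate()
        return json.dumps(asdict(self), sort_keys=True)


request = ClusterRequest(name="orders", region="eu-west-1", target_throughput_mb_per_s=50)
print(request.to_json())
```

The request contains no hardware, network, or broker tuning parameters. In this model those decisions sit on the provider's side of the API.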

High availability and fault tolerance

Providers architect their platforms to minimize single points of failure through redundant networking, storage, and geographically distributed clusters. Data replication across multiple brokers and even across availability zones or regions is made seamless, ensuring that applications stay online even in the event of hardware, network, or power failures. Automatic failover mechanisms reroute traffic and recover data streams quickly.

Fault tolerance is maintained through consistent monitoring and automatic recovery features. If a node or broker fails, managed services quickly rebalance partitions, restore state, and redistribute workload. These resilience features are backed by service-level agreements (SLAs) for uptime and durability.
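The replication behavior described above can be reasoned about with two standard Kafka settings: the topic's replication factor and `min.insync.replicas`. The Python sketch below is a simplified model, not broker code. It assumes producers use `acks=all` and that every surviving replica is in sync, and the function names are illustrative.

```python
def tolerated_failures(replication_factor: int, min_insync_replicas: int) -> int:
    """Broker failures a partition can absorb while still accepting acks=all writes."""
    if not 1 <= min_insync_replicas <= replication_factor:
        raise ValueError("min_insync_replicas must be between 1 and replication_factor")
    return replication_factor - min_insync_replicas


def partition_status(replication_factor: int, min_insync_replicas: int,
                     failed_replicas: int) -> str:
    """Simplified view of a partition's state after some of its replicas fail."""
    alive = replication_factor - failed_replicas
    if alive <= 0:
        return "offline"      # no replica left to act as leader
    if alive < min_insync_replicas:
        return "read-only"    # acks=all writes are rejected, reads can continue
    return "writable"


# A common setup: three replicas spread across zones, two required in sync.
for failed in range(4):
    print(failed, partition_status(3, 2, failed))
```

With a replication factor of 3 and `min.insync.replicas` of 2, one broker or zone can be lost without affecting writes. This is why multi-zone deployments usually place three replicas in three zones.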

Security and access control

Managed Kafka providers offer built-in security capabilities to protect data as it moves through streaming pipelines. This includes encryption at rest and in transit, preventing unauthorized interception or tampering. Providers integrate with identity and access management systems, enabling fine-grained access controls based on roles or organizational policies. This ensures that only authorized users and applications can produce, consume, or administer topics.

Security best practices are enforced by default, including regular vulnerability scanning, network isolation, and logging of access attempts. Many providers also offer compliance certifications for standards such as GDPR, HIPAA, or SOC 2, making it easier for organizations to meet regulatory obligations. Centralized access control simplifies auditing and governance.
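On the client side, encryption in transit and authentication usually come down to a few connection properties. The Python sketch below assembles such a configuration using librdkafka-style property names. The environment variable names are hypothetical, and the SASL mechanism is an assumption, because supported mechanisms vary by provider.

```python
import os


def secure_client_config(bootstrap_servers: str) -> dict:
    """Build client settings for TLS-encrypted, SASL-authenticated connections."""
    return {
        "bootstrap.servers": bootstrap_servers,
        # SASL_SSL means TLS encryption in transit plus SASL authentication.
        "security.protocol": "SASL_SSL",
        # Assumed mechanism; check which ones the provider supports.
        "sasl.mechanism": "SCRAM-SHA-512",
        # Credentials come from the environment rather than source code.
        # KAFKA_USERNAME and KAFKA_PASSWORD are hypothetical variable names.
        "sasl.username": os.environ["KAFKA_USERNAME"],
        "sasl.password": os.environ["KAFKA_PASSWORD"],
    }
```

A dictionary like this would typically be passed to a Kafka client's producer or consumer constructor. Which topics that identity can then read or write is decided by the provider's access control policies, not by the client.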

Observability and monitoring

Managed Kafka services offer integrated dashboards, metrics, and automated alerts to monitor cluster health, throughput, latency, and resource utilization. This visibility helps teams identify anomalies, detect bottlenecks, and maintain optimal performance. Metrics can be exported to third-party monitoring platforms for deeper analysis and incident response workflows.

Providers often include pre-configured alerts for lagging consumers, storage growth, or degraded nodes. Real-time monitoring reduces the mean time to detect and fix potential outages. Combined with detailed logging and tracing, observability features empower organizations to troubleshoot issues quickly. Efficient monitoring supports better capacity planning and ensures a reliable streaming environment.
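The lagging-consumer alerts mentioned above rest on a simple calculation: for each partition, lag is the latest offset in the log minus the offset the consumer group has committed. The Python sketch below shows that calculation on made-up offsets. The function names and the alert threshold are illustrative, and a managed service would read the offsets from the cluster rather than take them as arguments.

```python
from typing import Dict, List


def consumer_lag(end_offsets: Dict[int, int], committed: Dict[int, int]) -> Dict[int, int]:
    """Per-partition lag: latest log offset minus the group's committed offset."""
    # Assumption: a partition with no committed offset is treated as unread from offset 0.
    return {p: max(0, end - committed.get(p, 0)) for p, end in end_offsets.items()}


def lagging_partitions(lag: Dict[int, int], threshold: int) -> List[int]:
    """Partitions whose lag exceeds the alert threshold, in ascending order."""
    return sorted(p for p, n in lag.items() if n > threshold)


# Made-up offsets for a three-partition topic.
end_offsets = {0: 1500, 1: 900, 2: 4000}
committed = {0: 1480, 1: 900, 2: 1000}

lag = consumer_lag(end_offsets, committed)
print(lag)
print(lagging_partitions(lag, threshold=1000))
```

Here only partition 2 crosses the threshold. That points to a slow or stuck consumer on that partition, not to a cluster-wide problem.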

Notable Kafka as a service solutions

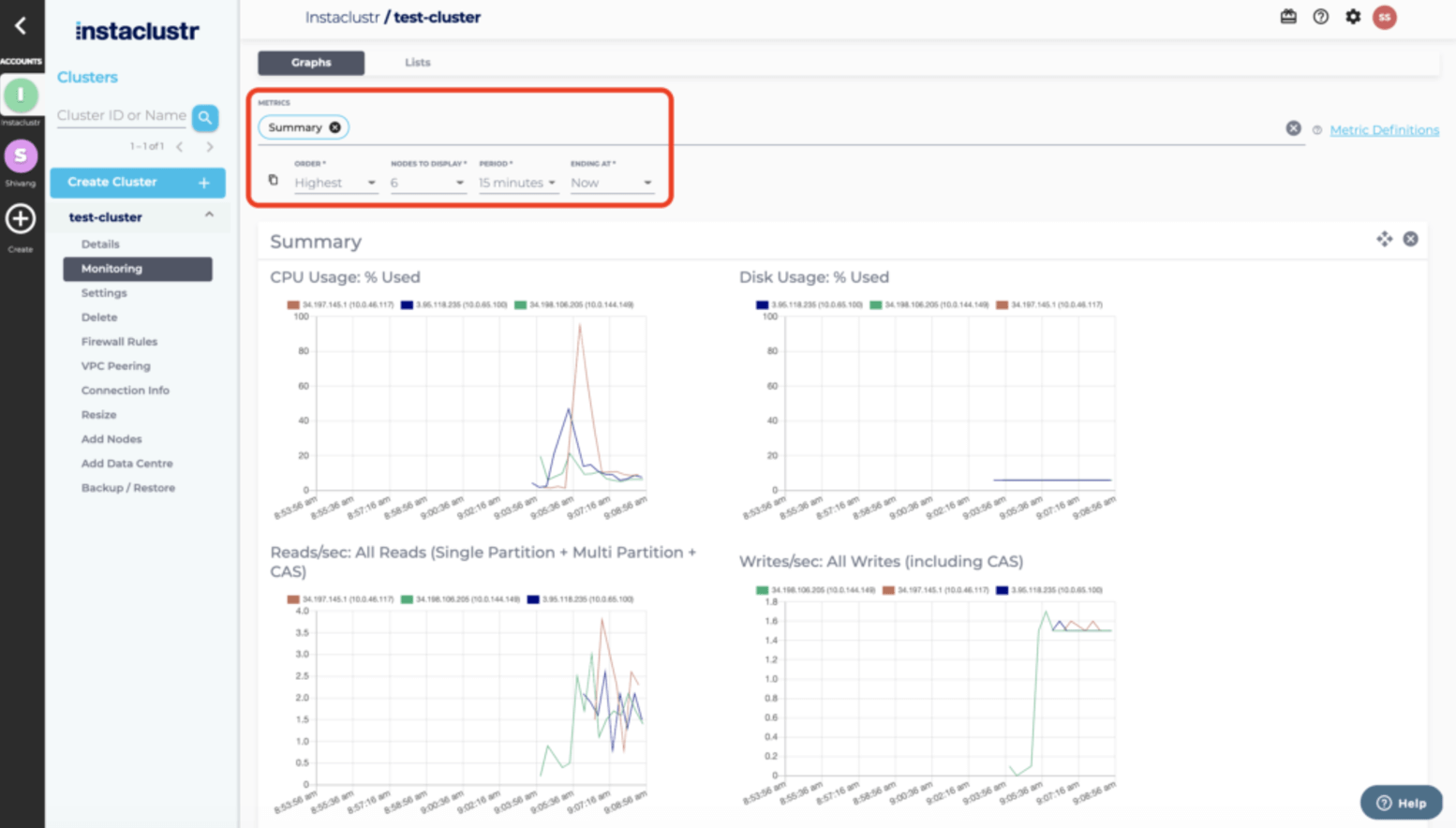

1. NetApp Instaclustr

Instaclustr for Kafka is a fully managed Apache Kafka service that streams data in real time without the headaches of operating complex infrastructure. Built for reliability, it delivers high availability, automated failover, and proactive monitoring so data pipelines stay up and running. It scales seamlessly as throughput and topics grow, providing consistent performance from pilot to production. With expert support, streamlined provisioning, and built-in best practices, Instaclustr makes Kafka easier to adopt and operate, so organizations can focus on building real-time applications, not managing clusters.

- High availability and automated failover — Keeps streams running during outages so you avoid downtime and lost messages.

- Seamless horizontal scalability — Adds brokers and storage on demand to handle traffic spikes without re-architecting.

- End-to-end security — Encrypts data in transit and at rest, with role-based access control and private networking to protect sensitive workloads.

- Proactive monitoring and alerting — Tracks broker health, lag, and throughput with actionable alerts so issues can be fixed before they impact users.

- Managed upgrades and patching — Applies tested Kafka and OS updates, reducing risk and freeing teams up from maintenance windows.

- Performance tuning out of the box — Uses proven configurations for partitions, replication, and retention to deliver consistent low latency.

- Self-service provisioning — Launches clusters in minutes with guided defaults, cutting setup time and complexity.

- Expert 24/7 support — Access to Kafka specialists for architecture reviews and incident response to keep data pipelines healthy.

- Multi-cloud and region options — Deploy where needed to meet latency, data residency, and cost goals.

- Compliance-ready operations — Built-in controls and auditability to help meet industry standards and internal policies.

Source: NetApp Instaclustr

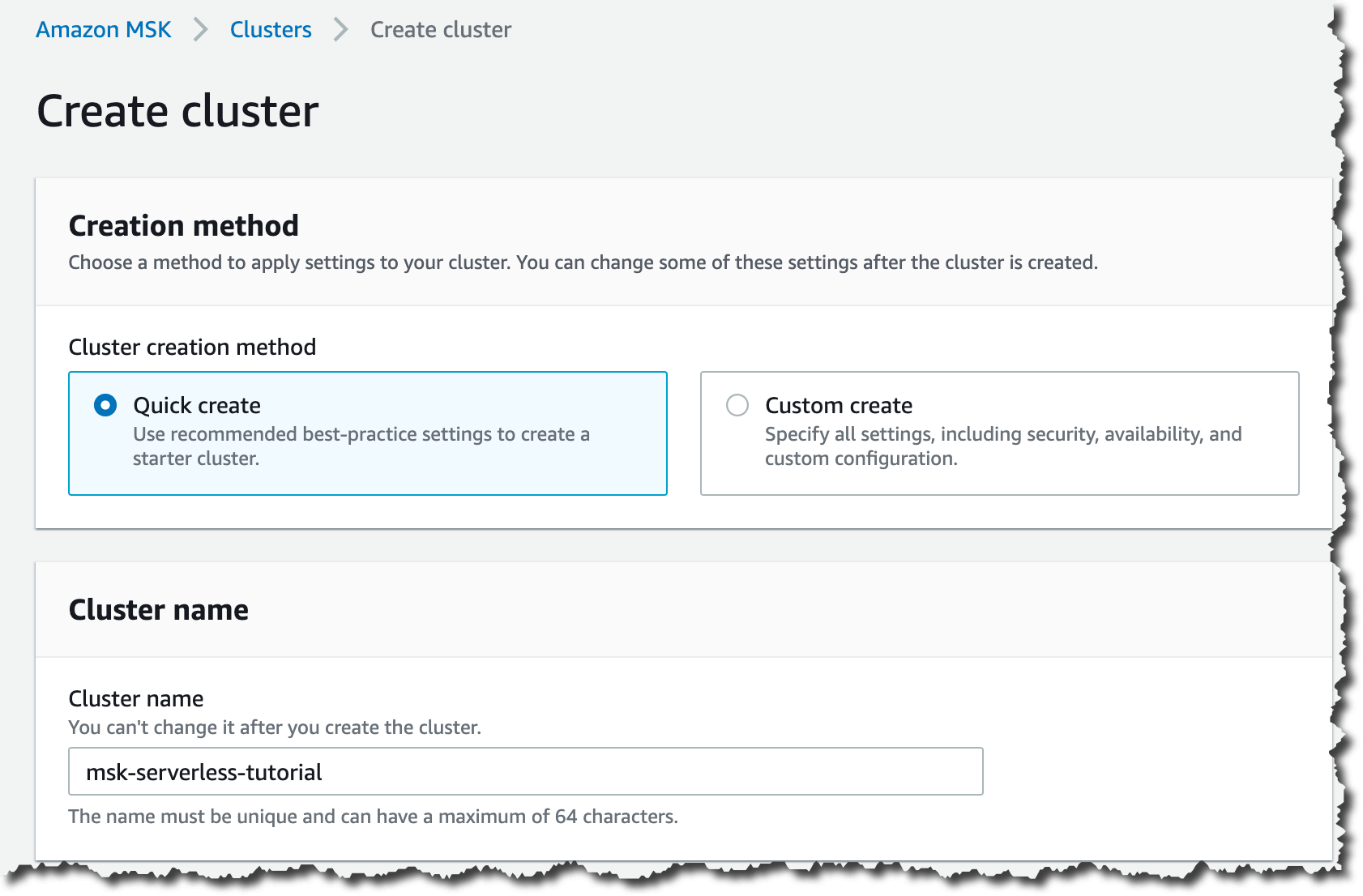

2. Amazon Managed Streaming for Apache Kafka (MSK)

Amazon MSK is a fully managed service that handles the infrastructure, scaling, and maintenance of Apache Kafka clusters on AWS. It enables teams to run Kafka applications and Kafka Connect connectors without needing deep operational expertise. The platform integrates with AWS services, supports enterprise-grade security, and provides multi-AZ deployment.

Key features include:

- Pay-as-you-go pricing: Cost-efficient model with low price-to-performance ratio for Kafka workloads.

- Resiliency and availability: Multi-AZ deployments with automated detection, mitigation, and recovery of failures.

- Operational simplicity: Managed provisioning, configuration, and maintenance of Kafka and Kafka Connect clusters.

- AWS integrations: No-code or managed connectors to source and deliver data across AWS services.

- Flexible use cases: Supports log/event ingestion, centralized data buses, and real-time event-driven applications.

Source: Amazon

3. Google Cloud – Managed Service for Apache Kafka

Google Cloud’s Managed Service for Apache Kafka offers a managed, highly available Kafka platform that removes the complexity of operating brokers, managing storage, and performing upgrades. It runs open source Apache Kafka and Kafka Connect, ensuring compatibility with existing applications while adding integrations with Google Cloud’s IAM, monitoring, logging, and networking tools.

Key features include:

- Kafka Connect data integration: Can migrate or replicate Kafka clusters and stream data into BigQuery or Google Cloud Storage.

- Open source compatibility: Runs standard Apache Kafka and Kafka Connect code, with schema registry API support (Preview).

- Operational simplicity: Automated broker sizing, rebalancing, version updates, and built-in Cloud Monitoring and Logging.

- High availability by default: All deployments designed to be fault-tolerant with multi-zone architecture.

- Security integration: Supports Google Cloud IAM, customer-managed encryption keys (CMEK), and Virtual Private Cloud (VPC) networking.

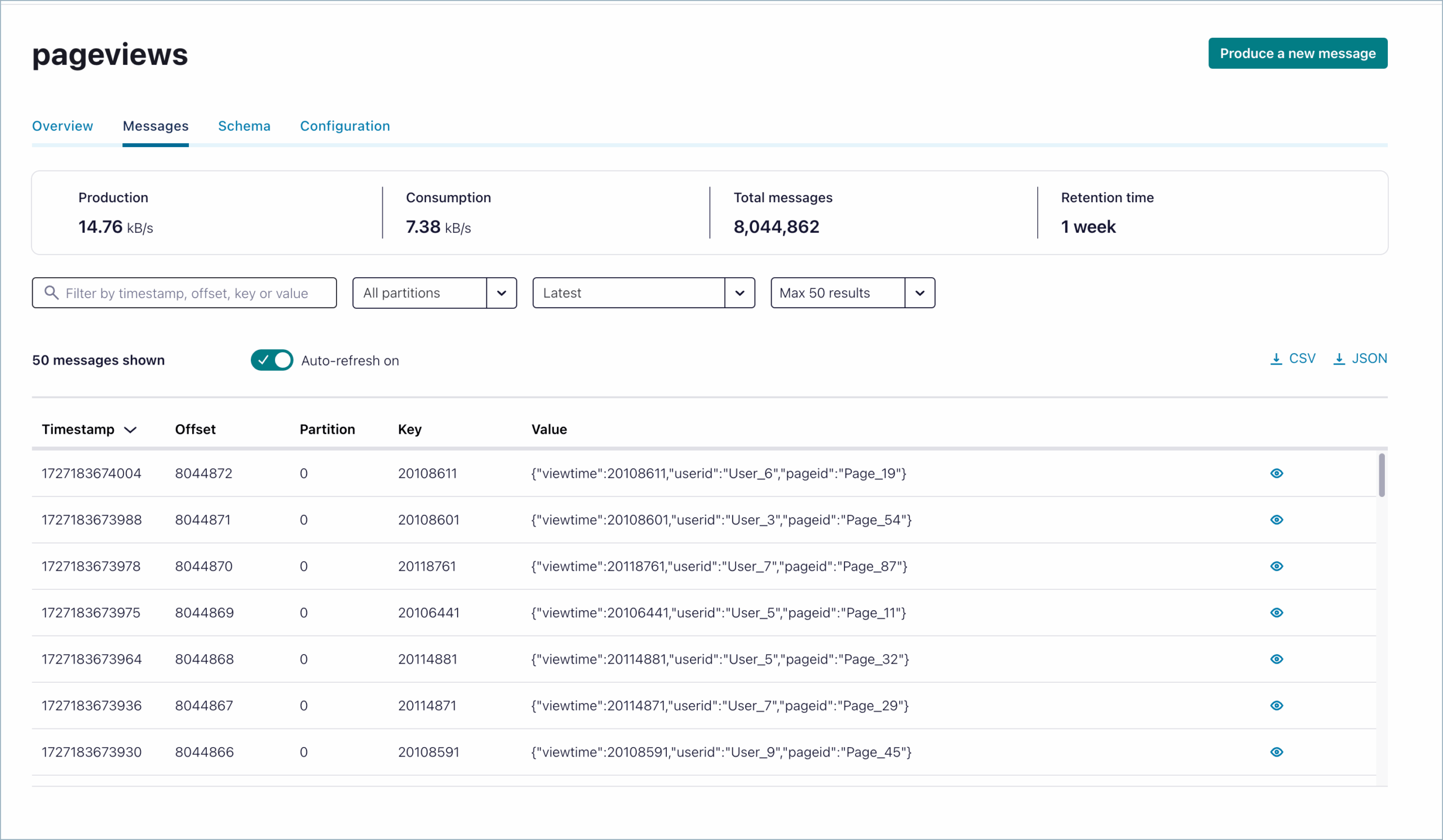

4. Confluent Cloud

Confluent Cloud is a fully managed, serverless Apache Kafka service designed to run across major public clouds. Supported by Confluent’s Kora engine, it delivers autoscaling to match workload demands, eliminating the need to over-provision resources and reducing infrastructure costs by up to 60% compared to self-managed Kafka.

Key features include:

- Elastic autoscaling with Kora: Dynamically adjusts cluster capacity to workload needs, cutting infrastructure costs and preventing resource waste.

- Cluster options: Basic, Standard, Enterprise, and Freight clusters optimized for development, production, or high-volume workloads.

- Multi-region & multicloud support: Uses Cluster Linking to replicate and share data across regions, clouds, or organizations.

- Enterprise-grade security: RBAC, encryption (self-managed keys, client-side field-level), audit logs, and regulatory compliance readiness.

- Managed connectors: Offers over 120 pre-built and 80 managed connectors for integrating with databases, data lakes, warehouses, and SaaS tools.

Source: Confluent

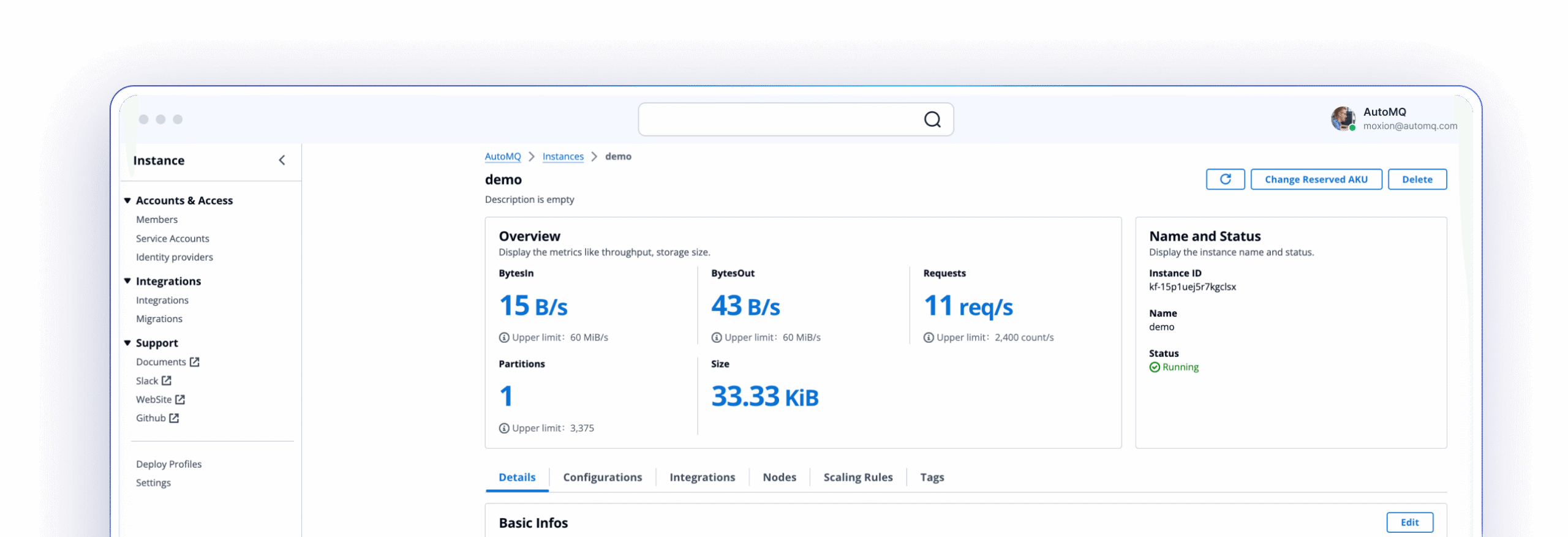

5. AutoMQ

AutoMQ is a cloud-native, Kafka-compatible streaming data platform that emphasizes efficiency, scalability, and cost optimization. Built on a compute-storage separation model with data stored on S3, brokers are stateless, enabling near-instant scaling and reducing operational complexity.

Key features include:

- Kafka compatibility: Compatible with Apache Kafka APIs and ecosystem tools.

- Cloud-first architecture: Compute-storage separation with all data stored on S3, enabling stateless brokers and seamless scaling.

- Cost optimization: Reduces storage costs by up to 90%, cuts idle capacity expenses, and helps avoid cross-AZ traffic fees.

- Built-in connectors: Over 300 Kafka connectors for rapid integration with databases, SaaS apps, and analytics systems.

- Iceberg-ready streaming: Directly streams data into Iceberg tables for real-time analytics and lakehouse workflows.

Source: AutoMQ

Conclusion

Adopting Kafka as a Service allows organizations to focus on delivering streaming-driven applications instead of managing the complexities of distributed messaging infrastructure. With built-in scalability, fault tolerance, security, and observability, these platforms simplify operations while ensuring high performance and reliability. This approach reduces operational risk, accelerates time to production, and enables teams to adapt quickly to evolving data demands.