What are enterprise AI search platforms?

Enterprise AI search platforms use artificial intelligence to unify and search across company data (documents, chats, and business systems) and return fast, personalized answers. Rather than relying on keyword matching alone, they take into account the context of a query, the user asking it, and that user's intent. Top players include Instaclustr, Guru, and Kore.ai.

Core features of enterprise AI search include:

- Unified search: Single interface for data across multiple sources (SharePoint, Salesforce, Slack, etc.).

- Generative AI and RAG: Uses large language models (LLMs) such as Gemini to provide direct answers, summaries, and insights grounded in your documents through retrieval-augmented generation (RAG).

- Personalization: Delivers results based on user roles, context, and past behavior.

- Connectors: Pre-built integrations to link to CRMs, ERPs, cloud storage, and communication tools.

- AI agents: Autonomous agents for complex tasks, research, or automated workflows.

- Security: Enforces existing access controls so that users only see content they are authorized to view.

This is part of a series of articles about vector databases.

Core features of AI enterprise search platforms

Unified search

Unified search is a foundational feature that allows users to query data across multiple sources, including emails, file systems, intranets, cloud apps, and databases, from a single search bar. Instead of searching each platform individually, users receive consolidated results ranked by relevance, regardless of where the data physically resides.

This saves time and ensures that valuable information isn't missed simply because it's stored in a less obvious location. For teams that work across a suite of business tools, unified search reduces fragmentation by normalizing varied data types and presenting results in a single, consistent view. This aggregation typically involves indexing, mapping source-specific fields to a common schema, and sometimes metadata enrichment.
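To make the normalization step concrete, here is a minimal Python sketch, assuming each source already returns its own hits with a native relevance score. The `FIELD_MAPS` table, the field names, and the `unified_search` function are hypothetical; real platforms do this mapping inside their connectors and ranking layer. The sketch maps source-specific fields onto one common schema and rescales each source's scores to [0, 1] before merging, since a raw Slack score and a raw SharePoint score are not directly comparable.

```python
from dataclasses import dataclass

@dataclass
class SearchResult:
    """Common schema that every source is mapped into (field names are illustrative)."""
    source: str
    title: str
    url: str
    score: float  # rescaled to [0, 1] within its own source

# Hypothetical field mappings: native field name -> common field name.
FIELD_MAPS = {
    "sharepoint": {"Title": "title", "FileRef": "url"},
    "slack": {"text": "title", "permalink": "url"},
}

def min_max(scores):
    """Rescale raw scores to [0, 1] so sources with different score ranges are comparable."""
    lo, hi = min(scores), max(scores)
    if hi == lo:
        return [1.0 for _ in scores]
    return [(s - lo) / (hi - lo) for s in scores]

def normalize_source(source, raw_hits):
    """Map one source's raw hits (dicts with a 'score' key) into SearchResult objects."""
    if not raw_hits:
        return []
    mapping = FIELD_MAPS[source]
    scores = min_max([hit["score"] for hit in raw_hits])
    return [
        SearchResult(source=source, score=score,
                     **{common: hit[native] for native, common in mapping.items()})
        for hit, score in zip(raw_hits, scores)
    ]

def unified_search(hits_by_source):
    """Merge normalized hits from every source into one relevance-ordered list."""
    merged = []
    for source, raw_hits in hits_by_source.items():
        merged.extend(normalize_source(source, raw_hits))
    return sorted(merged, key=lambda r: r.score, reverse=True)

# Example with made-up hits from two sources whose raw scores use different scales.
hits = {
    "sharepoint": [
        {"Title": "Travel policy", "FileRef": "/sites/hr/travel.docx", "score": 12.0},
        {"Title": "Expense FAQ", "FileRef": "/sites/fin/faq.docx", "score": 4.0},
    ],
    "slack": [
        {"text": "New travel policy is live", "permalink": "https://example.slack.com/p1", "score": 0.9},
        {"text": "Lunch thread", "permalink": "https://example.slack.com/p2", "score": 0.1},
    ],
}
for r in unified_search(hits):
    print(r.source, r.title, r.score)
```

Per-source min-max scaling is the simplest option, but it always gives each source's best hit a score of 1.0, even when that hit is weak. Production systems often use rank-based fusion or learned score calibration instead.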

Generative AI and RAG

Generative AI refers to systems that can produce content such as answers, summaries, or explanations using trained large language models (LLMs). In enterprise search platforms, integrating generative AI allows users to get synthesized and contextualized responses to queries, not just a list of documents or files that might be relevant.

Retrieval-augmented generation (RAG) combines search with generation: relevant passages are first retrieved from trusted enterprise data, then passed to the LLM as context so it can compose a coherent, up-to-date answer. Because the answer is grounded in retrieved content rather than only in what the model learned during training, RAG helps bridge the gap between the fluency of generative AI and the strict accuracy requirements of enterprise data.
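The retrieve-then-generate flow can be sketched in a few lines of Python. This is an illustration under simplifying assumptions: the term-overlap `retrieve` function stands in for a real lexical or vector retriever, the document IDs are made up, and the final LLM call is left out because it depends on the model provider. What matters is the shape of the prompt: only retrieved passages are supplied, each tagged with an ID the model can cite.

```python
import re
from collections import Counter

def tokenize(text):
    """Lowercase alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())

def retrieve(query, passages, k=2):
    """Rank passages by term overlap with the query (a stand-in for a real retriever)."""
    q = Counter(tokenize(query))
    scored = []
    for doc_id, text in passages.items():
        overlap = sum(min(q[t], c) for t, c in Counter(tokenize(text)).items())
        scored.append((overlap, doc_id))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc_id for score, doc_id in scored[:k] if score > 0]

def build_grounded_prompt(query, passages, k=2):
    """Assemble an LLM prompt restricted to retrieved, citable passages.

    Sending the prompt to a model is deliberately omitted (provider-specific).
    """
    ids = retrieve(query, passages, k)
    context = "\n".join(f"[{doc_id}] {passages[doc_id]}" for doc_id in ids)
    return (
        "Answer using only the sources below and cite them by ID. "
        "If the sources do not contain the answer, say so.\n\n"
        f"Sources:\n{context}\n\nQuestion: {query}\nAnswer:"
    )

# Hypothetical enterprise passages keyed by document ID.
docs = {
    "hr-12": "Employees accrue 20 days of paid vacation per year.",
    "it-03": "Reset your VPN password from the self-service portal.",
}
print(build_grounded_prompt("How many vacation days do employees get?", docs))
```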

Personalization

Enterprise AI search platforms increasingly support personalization by analyzing individual user behavior, preferences, and contextual signals. These systems learn from user interactions such as frequently accessed documents, applied filters, and implicit feedback to tailor search rankings and recommendations for each person. As a result, employees receive content that is more relevant to their role, team, or recent activities, improving productivity and satisfaction.

Personalization is particularly important in large organizations where employees have diverse functions and information needs. It helps prevent information overload and ensures that search results reflect not only general relevance but also each user's current work context and past actions.
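As a rough illustration, the sketch below re-ranks results with two hand-tuned signals: whether the document belongs to the user's team and how often the user has opened it recently. The `UserContext` fields and the boost values are hypothetical; platforms that learn from interactions typically fit such weights with a ranking model rather than fixing them by hand.

```python
from dataclasses import dataclass, field

@dataclass
class UserContext:
    team: str
    # Hypothetical signal: how many times this user recently opened each document.
    open_counts: dict = field(default_factory=dict)

def personalize(results, user, team_boost=0.2, history_boost=0.1, max_history=3):
    """Re-rank (doc_id, base_score, owning_team) tuples using simple user signals.

    The boost values are illustrative, not tuned.
    """
    reranked = []
    for doc_id, base_score, owner_team in results:
        score = base_score
        if owner_team == user.team:
            score += team_boost  # content owned by the user's own team
        opens = min(user.open_counts.get(doc_id, 0), max_history)
        score += history_boost * opens  # capped so history cannot swamp relevance
        reranked.append((doc_id, score))
    return sorted(reranked, key=lambda pair: pair[1], reverse=True)

results = [
    ("sales-deck", 0.80, "sales"),
    ("eng-runbook", 0.75, "engineering"),
    ("hr-handbook", 0.70, "hr"),
]
user = UserContext(team="engineering", open_counts={"hr-handbook": 1})
print([doc_id for doc_id, _ in personalize(results, user)])
```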

Connectors

Connectors are critical components enabling enterprise AI search platforms to interface with a wide variety of data sources. They extract, transform, and load data from third-party applications, file stores, cloud services, and databases into the search index. Well-designed connectors preserve the structure, metadata, and access controls of the original content, ensuring seamless integration without compromising security or compliance.

A robust ecosystem of connectors allows an enterprise search platform to be flexible and extensible, adapting to changing business application landscapes. Modern platforms often ship with out-of-the-box connectors for popular services like Microsoft 365, Salesforce, Slack, or Google Workspace and offer APIs or SDKs for custom integration.
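One way to picture a connector is as a small contract: fetch changed items from a source and hand them to the indexer with metadata and permissions attached. The Python sketch below assumes a hypothetical `Connector` base class and a toy in-memory wiki in place of a real API; it is not the SDK of any particular platform.

```python
from abc import ABC, abstractmethod
from dataclasses import dataclass
from typing import Iterator

@dataclass
class IndexedDocument:
    doc_id: str
    source: str
    text: str
    metadata: dict
    allowed_groups: frozenset  # access control carried over from the source system

class Connector(ABC):
    """Hypothetical connector contract: pull documents, keeping metadata and ACLs intact."""
    source_name: str = "unknown"

    @abstractmethod
    def fetch(self, since: float) -> Iterator[IndexedDocument]:
        """Yield documents created or modified after the `since` timestamp."""

class InMemoryWikiConnector(Connector):
    """Toy connector over a list of dicts, standing in for a real wiki API."""
    source_name = "wiki"

    def __init__(self, pages):
        self.pages = pages

    def fetch(self, since):
        for page in self.pages:
            if page["modified"] > since:
                yield IndexedDocument(
                    doc_id=f"{self.source_name}:{page['id']}",
                    source=self.source_name,
                    text=page["body"],
                    metadata={"title": page["title"], "modified": page["modified"]},
                    allowed_groups=frozenset(page["groups"]),
                )

def sync(connectors, index, since):
    """Incremental sync: upsert every changed document into a dict-based index."""
    for connector in connectors:
        for doc in connector.fetch(since):
            index[doc.doc_id] = doc
    return index

pages = [
    {"id": 1, "title": "Onboarding", "body": "Start here.", "modified": 100.0, "groups": ["all-staff"]},
    {"id": 2, "title": "Old page", "body": "Archived.", "modified": 10.0, "groups": ["eng"]},
]
index = sync([InMemoryWikiConnector(pages)], {}, since=50.0)
print(sorted(index))
```

Carrying `allowed_groups` on every document is what lets the search layer enforce source permissions later, and the `since` timestamp keeps syncs incremental rather than re-crawling everything.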

AI agents

AI agents are automated components within enterprise search platforms that can execute tasks, answer questions, or guide users through information-rich workflows. Leveraging natural language understanding and scripted logic, these agents can help users refine queries, surface contextual answers, or suggest next steps, often without human intervention.

Some advanced platforms allow for conversational interaction with AI agents, supporting dynamic Q&A, process automation, and decision support. By adopting AI agents, organizations can extend the reach of enterprise search beyond simple document retrieval. For example, agents can automate common support tasks, onboard users, or proactively offer insights based on detected trends or user needs.
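At its core, an agent decides which action (tool) fits a request and then runs it. The Python sketch below uses keyword rules to make that decision so the example stays deterministic; production agents usually let an LLM select tools and fill in their arguments. The tool functions and the `ROUTES` table are hypothetical placeholders for real integrations such as an IT ticketing or HR system.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical tools; real ones would call ticketing, HR, or search APIs.
def search_kb(query: str) -> str:
    return f"Top knowledge-base article for '{query}'"

def reset_password(query: str) -> str:
    return "Opened a password-reset ticket for the current user"

def create_pto_request(query: str) -> str:
    return "Drafted a PTO request for manager approval"

# Hypothetical routing table: (trigger keywords, tool). A production agent would
# usually let an LLM choose the tool; keyword rules keep this sketch deterministic.
ROUTES: List[Tuple[Tuple[str, ...], Callable[[str], str]]] = [
    (("password", "locked out"), reset_password),
    (("vacation", "pto", "time off"), create_pto_request),
]

def run_agent(query: str) -> Dict[str, str]:
    """Pick a tool for the query, run it, and report which tool acted."""
    q = query.lower()
    for keywords, tool in ROUTES:
        if any(k in q for k in keywords):
            return {"tool": tool.__name__, "result": tool(query)}
    # Fall back to plain retrieval when no action matches.
    return {"tool": search_kb.__name__, "result": search_kb(query)}

for request in ["I'm locked out of my laptop", "Book PTO next Friday", "Where is the brand guide?"]:
    print(run_agent(request))
```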

Security

Security is a non-negotiable pillar in enterprise AI search platforms. These platforms must rigorously enforce the underlying permissions and access controls of all connected data sources. Role-based access, single sign-on (SSO), multi-factor authentication (MFA), and fine-grained permissions ensure that sensitive information is only visible to authorized users.

Platforms typically encrypt data in transit and at rest and keep comprehensive audit logs for monitoring and compliance. Enterprise customers also expect support for the regulations and standards that apply to their industry, such as GDPR, HIPAA, and SOC 2.
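Permission enforcement is often described as security trimming: every candidate result is checked against the access control list (ACL) captured from its source before the user sees it. The Python sketch below assumes ACLs are stored as group names on each hit and that the user's group memberships come from the identity provider; the `Hit` type and `security_trim` function are hypothetical. It denies by default and writes an audit entry for each query.

```python
import time
from dataclasses import dataclass

@dataclass(frozen=True)
class Hit:
    doc_id: str
    score: float
    allowed_groups: frozenset  # ACL copied from the source system at index time

def security_trim(hits, user_id, user_groups, audit_log):
    """Return only hits the user may see (deny by default) and record an audit entry."""
    groups = frozenset(user_groups)
    visible = [h for h in hits if h.allowed_groups & groups]
    audit_log.append({
        "ts": time.time(),
        "user": user_id,
        "returned": len(visible),
        "withheld": len(hits) - len(visible),
    })
    return visible

audit = []
hits = [
    Hit("board-minutes", 0.92, frozenset({"executives"})),
    Hit("benefits-guide", 0.85, frozenset({"all-staff"})),
]
print([h.doc_id for h in security_trim(hits, "jdoe", {"all-staff", "engineering"}, audit)])
print(audit[-1]["withheld"])
```

In practice, many platforms apply the permission filter inside the index query rather than after retrieval, so a page of top results is not emptied by trimming and result counts do not hint at hidden documents.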

How enterprise AI search platforms work

Enterprise AI search platforms operate through a multi-layered architecture that combines data integration, indexing, semantic understanding, and response generation. The core process begins with ingesting data from multiple sources using connectors, which extract content, metadata, and access controls. This content is normalized and indexed, enabling efficient retrieval across structured and unstructured formats.
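Before indexing, long documents are usually split into smaller chunks so each piece fits an embedding model's input limit and can be retrieved on its own. The sketch below is a minimal version, assuming whitespace-separated words as a stand-in for model tokens; the window size, overlap, and record fields are hypothetical defaults. Each chunk inherits its parent document's ID and access list so that permission checks still work at query time.

```python
def chunk_text(text, max_words=200, overlap=40):
    """Split text into overlapping word windows (words approximate model tokens here)."""
    if overlap >= max_words:
        raise ValueError("overlap must be smaller than max_words")
    words = text.split()
    chunks = []
    step = max_words - overlap
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + max_words]))
        if start + max_words >= len(words):
            break  # the last window already reaches the end of the text
    return chunks

def to_index_records(doc_id, text, acl, **chunk_kwargs):
    """Hypothetical index records: each chunk keeps its parent's ID and ACL."""
    return [
        {"id": f"{doc_id}#{i}", "parent": doc_id, "text": chunk, "acl": sorted(acl)}
        for i, chunk in enumerate(chunk_text(text, **chunk_kwargs))
    ]

sample = " ".join(f"w{i}" for i in range(10))
for record in to_index_records("policy-7", sample, {"all-staff"}, max_words=4, overlap=1):
    print(record)
```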

Once indexed, the platform applies AI models, typically combining traditional search algorithms with vector-based semantic retrieval. Natural language processing is used to parse user queries, identify intent, and match queries with the most relevant documents or answers. Advanced platforms use large language models (LLMs) to understand context and generate summaries or direct responses.
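One common way to combine keyword and vector retrieval is reciprocal rank fusion (RRF), which merges ranked lists using only each document's position, so scores on different scales never need to be compared. The Python sketch below assumes the lexical and semantic rankings have already been computed; `k=60` is a commonly used default, not a value taken from any platform discussed here.

```python
from collections import defaultdict

def reciprocal_rank_fusion(rankings, k=60):
    """Fuse ranked lists of doc IDs: score(d) = sum over lists of 1 / (k + rank)."""
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    return sorted(scores, key=scores.get, reverse=True)

lexical = ["d1", "d2", "d3"]   # e.g. BM25 order
semantic = ["d3", "d1", "d4"]  # e.g. vector-similarity order
print(reciprocal_rank_fusion([lexical, semantic]))  # ['d1', 'd3', 'd2', 'd4']
```

Documents that appear near the top of both lists (here `d1` and `d3`) rise above documents found by only one retriever.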

Retrieval-augmented generation (RAG) extends this process by passing the retrieved documents to a generative AI model, which synthesizes a coherent, context-aware response. Access controls and security policies are enforced at every layer so that users only see content they are authorized to access.

The system continuously learns from user behavior and feedback, improving ranking, personalization, and result quality over time. Some platforms also query source systems in real time via APIs or plugins, so users can reach the most current information even before it has been re-indexed.
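A simple form of learning from feedback is to track how often users click each result for a given query and nudge future rankings accordingly. The sketch below assumes click and impression logs are available per (query, document) pair; the `ClickFeedback` class, the prior values, and the blend weight are hypothetical. Smoothing toward a prior click-through rate (CTR) keeps a handful of clicks from overturning the ranking.

```python
from collections import defaultdict

class ClickFeedback:
    """Track impressions and clicks per (query, doc) and turn them into a ranking boost."""

    def __init__(self, prior_ctr=0.1, prior_weight=10.0):
        # Smoothing: treat each pair as if it already had `prior_weight`
        # impressions at `prior_ctr`, so one lucky click cannot dominate.
        self.prior_ctr = prior_ctr
        self.prior_weight = prior_weight
        self.impressions = defaultdict(int)
        self.clicks = defaultdict(int)

    def record(self, query, shown_doc_ids, clicked_doc_id=None):
        for doc_id in shown_doc_ids:
            self.impressions[(query, doc_id)] += 1
        if clicked_doc_id is not None:
            self.clicks[(query, clicked_doc_id)] += 1

    def smoothed_ctr(self, query, doc_id):
        key = (query, doc_id)
        clicks = self.clicks.get(key, 0)
        impressions = self.impressions.get(key, 0)
        return (clicks + self.prior_ctr * self.prior_weight) / (impressions + self.prior_weight)

    def rerank(self, query, scored_docs, weight=0.5):
        """Blend base relevance with how far the smoothed CTR sits above or below the prior."""
        def blended(pair):
            doc_id, base = pair
            return base + weight * (self.smoothed_ctr(query, doc_id) - self.prior_ctr)
        return sorted(scored_docs, key=blended, reverse=True)

fb = ClickFeedback()
for _ in range(20):
    fb.record("vpn setup", ["kb-old", "kb-new"], clicked_doc_id="kb-new")
print(fb.rerank("vpn setup", [("kb-old", 0.6), ("kb-new", 0.5)]))
```

Raw clicks are biased toward whatever was already ranked first, so production systems typically correct for position bias or run controlled experiments before trusting these signals.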

Tips from the expert

David vonThenen

Senior AI/ML Engineer

As an AI/ML engineer and developer advocate, David lives at the intersection of real-world engineering and developer empowerment. He thrives on translating advanced AI concepts into reliable, production-grade systems all while contributing to the open source community and inspiring peers at global tech conferences.

In my experience, here are tips that can help you better leverage enterprise AI search platforms:

- Inject enterprise-specific ontologies into vector search: Augment semantic search accuracy by integrating domain-specific ontologies (e.g., legal, pharma, finance) into vector embeddings. This sharpens concept matching and helps LLMs understand enterprise lingo more deeply.

- Deploy feedback-aware ranking pipelines: Build custom feedback loops where implicit signals (clicks, skips, time-on-result) influence future ranking. Over time, this tailors retrieval precision uniquely to the organization’s search behavior patterns.

- Integrate knowledge decay mechanisms: Implement logic that weights fresher content higher in search rankings or flags stale documents using time-based relevance decay. This ensures outdated or obsolete information doesn’t dominate results.

- Enable hybrid retrieval with dynamic source weighting: Combine lexical, semantic, and API-based real-time retrieval in one pipeline, and dynamically tune source weights based on query type (e.g., factual queries vs. process lookups).

- Use session-based personalization over static profiles: Instead of only relying on user roles, personalize based on live session context—what the user is doing now, recent searches, and query refinements. This delivers intent-aware results that adapt in real time.
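The knowledge decay tip can be expressed as an exponential freshness multiplier with a configurable half-life. In the Python sketch below, the 180-day half-life, the 0.3 floor that keeps old but authoritative documents visible, and the one-year staleness threshold are hypothetical values to tune per content type.

```python
from datetime import datetime, timedelta, timezone

def decayed_score(base_score, last_modified, now=None, half_life_days=180.0, floor=0.3):
    """Multiply relevance by 0.5 ** (age / half_life), never dropping below `floor`."""
    if now is None:
        now = datetime.now(timezone.utc)
    age_days = max((now - last_modified).total_seconds() / 86400.0, 0.0)
    freshness = 0.5 ** (age_days / half_life_days)
    return base_score * max(freshness, floor)

def is_stale(last_modified, now=None, max_age_days=365):
    """Flag documents older than the (hypothetical) review threshold."""
    if now is None:
        now = datetime.now(timezone.utc)
    return (now - last_modified).days > max_age_days

now = datetime(2025, 1, 1, tzinfo=timezone.utc)
for age in (0, 180, 720):
    modified = now - timedelta(days=age)
    print(age, round(decayed_score(1.0, modified, now=now), 3), is_stale(modified, now=now))
```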

Notable enterprise AI search platforms

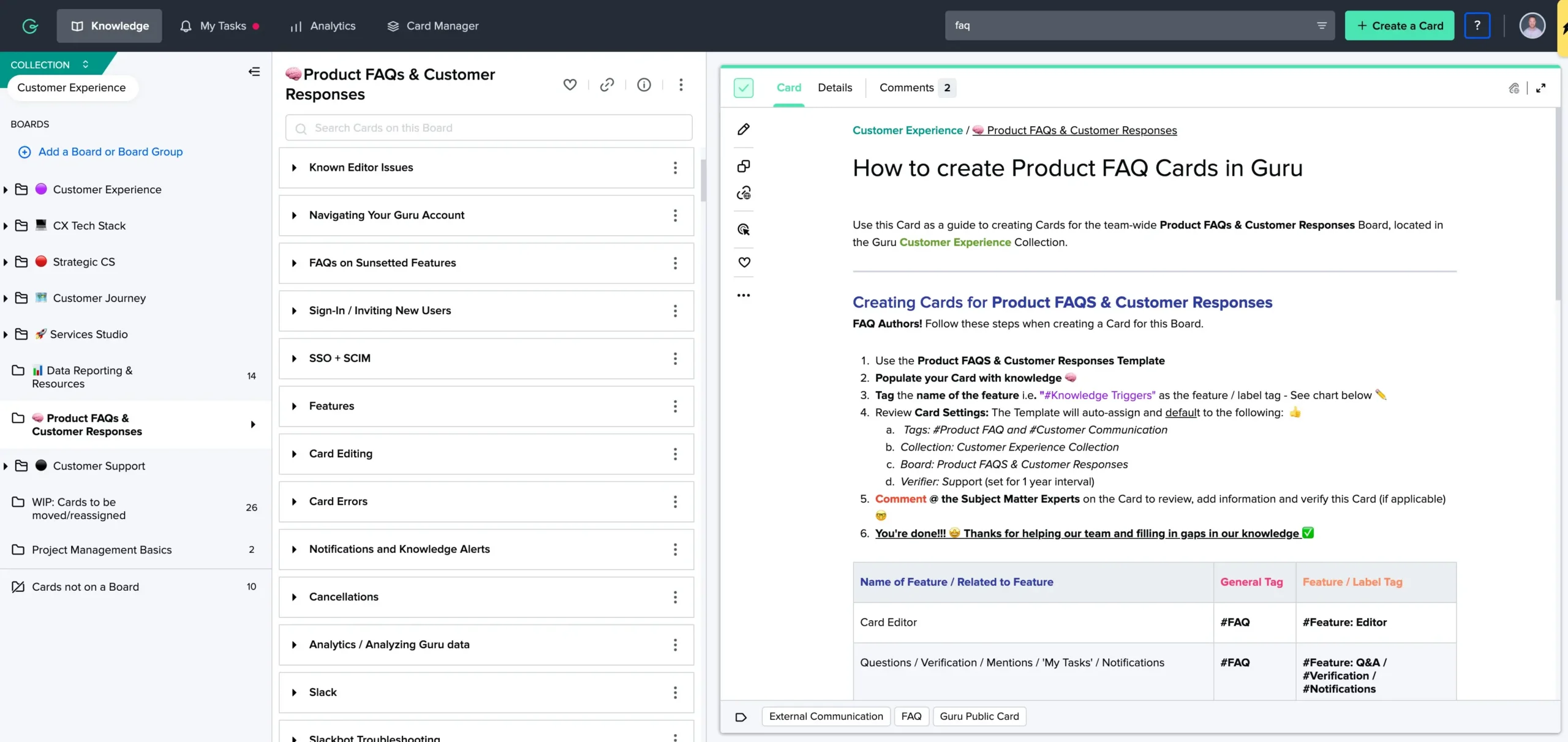

1. Guru

Guru is an enterprise AI search platform focused on delivering verified, permission-aware knowledge within an organization’s workflow. It enables teams to build AI-powered “Knowledge Agents” that provide trusted answers, chat responses, and guided research grounded in internal company data.

Key features include:

- Knowledge agents: AI-powered assistants that answer questions, chat with users, and guide research using verified company data

- Agentic search: Goes beyond document retrieval by reasoning over content to generate contextual responses

- Permission-aware responses: AI answers respect real-time permissions, ensuring data security and access compliance

- Slack and Teams integration: Delivers answers and announcements in communication platforms

- Research mode: Turns complex queries into structured research plans with citations and source references

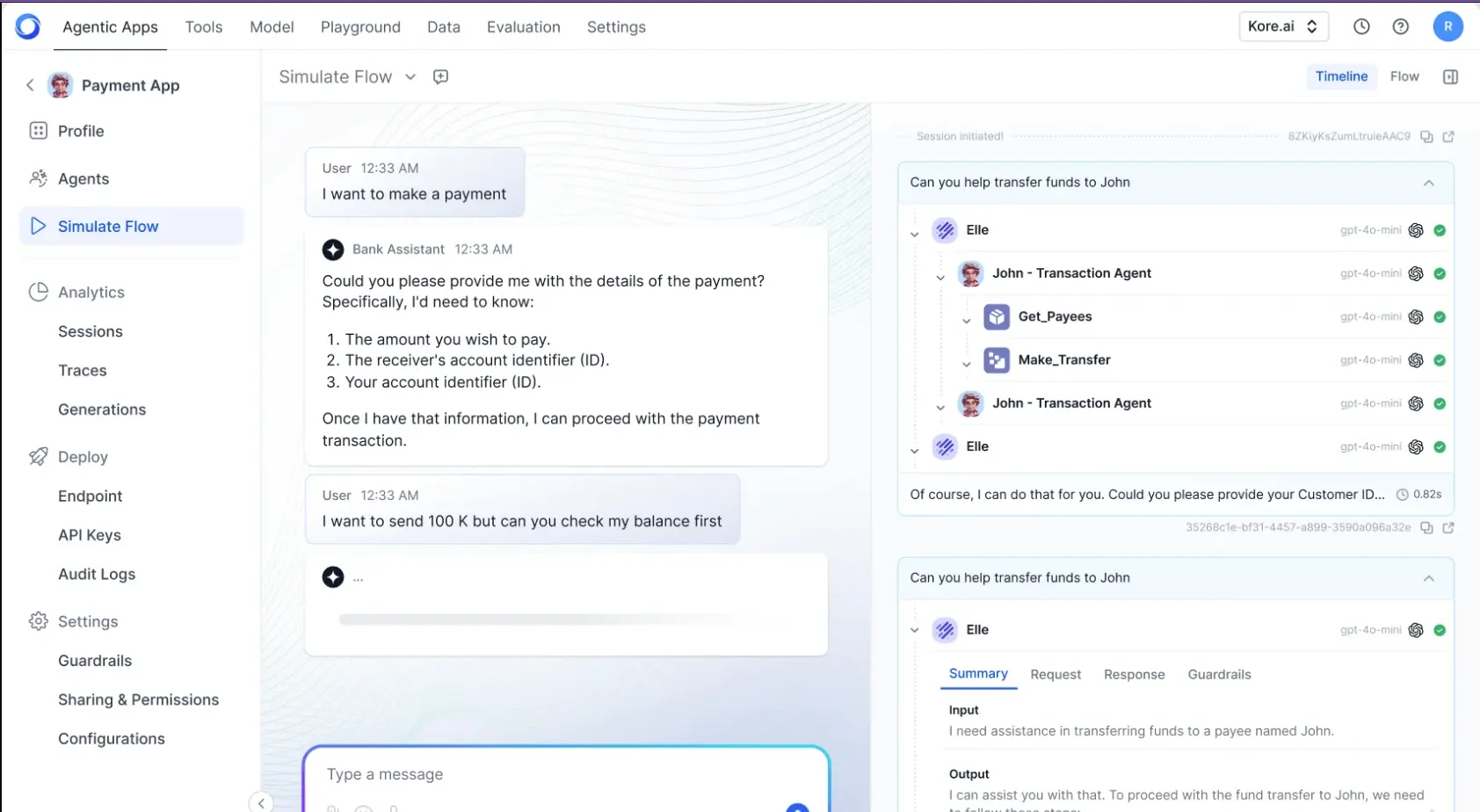

2. Kore.ai

Kore.ai is an enterprise AI platform designed to simplify work by embedding AI agents into operations across departments, systems, and tools. Its “AI for Work” solution unifies search, automation, and orchestration to reduce bottlenecks and improve efficiency. Rather than retrofitting AI onto a legacy system, Kore.ai is built natively on an agentic architecture.

Key features include:

- Enterprise search: Uses agentic retrieval-augmented generation (RAG) to surface permission-aware answers from both structured and unstructured data sources

- Pre-built AI agents: Access a marketplace of over 200 agents and 9,000 enterprise actions to automate tasks across HR, sales, IT, and finance

- No-code to pro-code agent builder: Allows both employees and admins to build and deploy custom AI agents and workflows using visual tools or configurations

- Orchestration engine: Coordinates multi-agent workflows with task routing, context sharing, and extensibility across enterprise processes

- Customizable search pipeline: Supports configuration of extraction, enrichment, and retrieval strategies to improve search accuracy

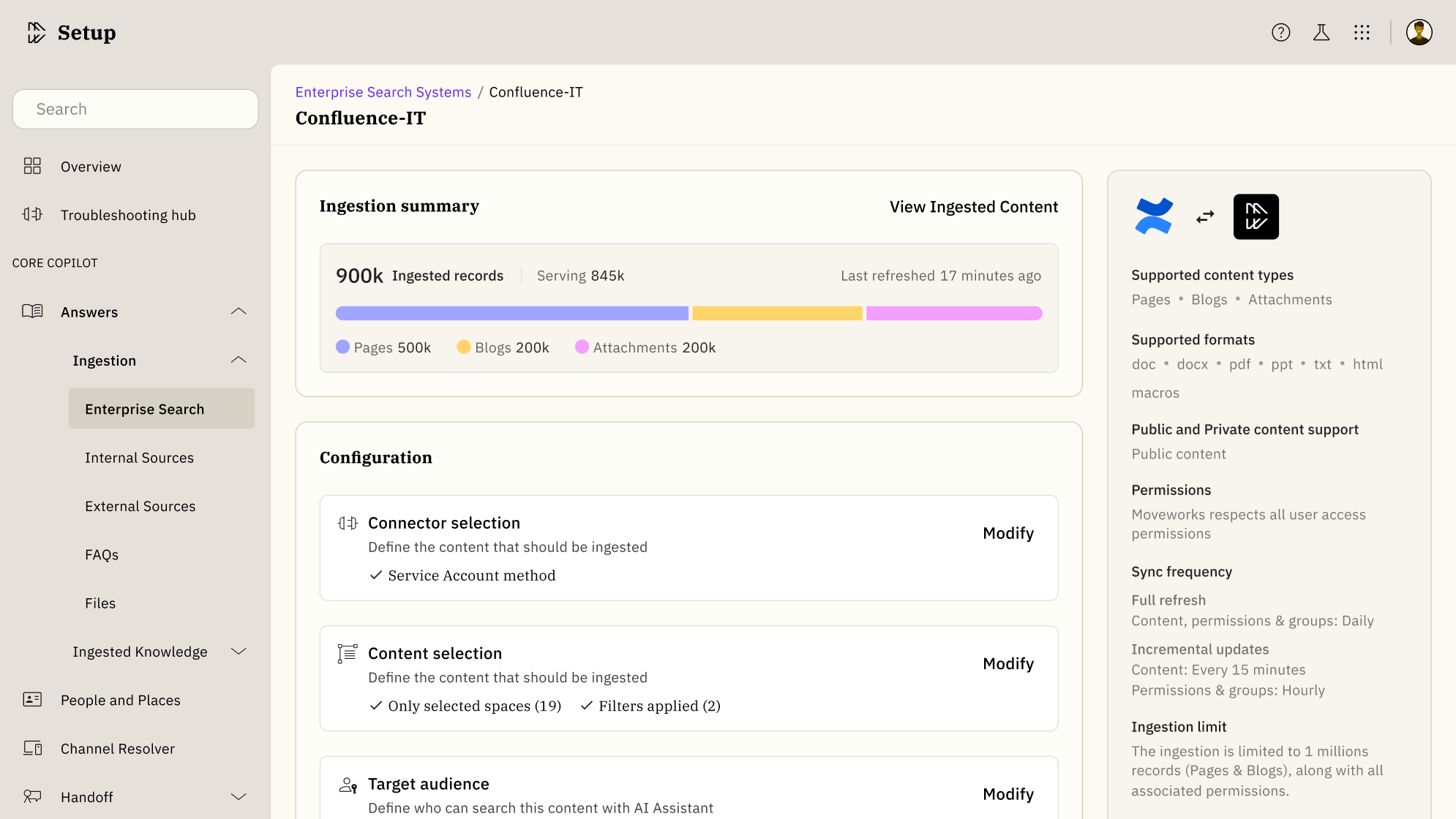

3. Moveworks Enterprise Search

Moveworks Enterprise Search is a context-aware solution that unifies fragmented enterprise knowledge and delivers precise answers. It interprets user intent using organizational context such as internal terminology, access permissions, location, and roles, and provides relevant responses with grounded AI summaries and inline source citations.

Key features include:

- Contextual AI answers: Understands internal language, relationships, and user intent to deliver accurate responses with AI summaries and inline citations

- Integrated knowledge access: Connects over 50 enterprise systems using both indexed and live API search for unified discovery across apps

- Granular search controls: Offers filters by source, date, and owner, along with vetted, grouped results to simplify navigation and ensure relevance

- Multilingual search (MLS): Enables effective search across multiple languages to support global teams

- Dynamic access enforcement: Adapts to changing roles and permissions, ensuring only authorized content is displayed

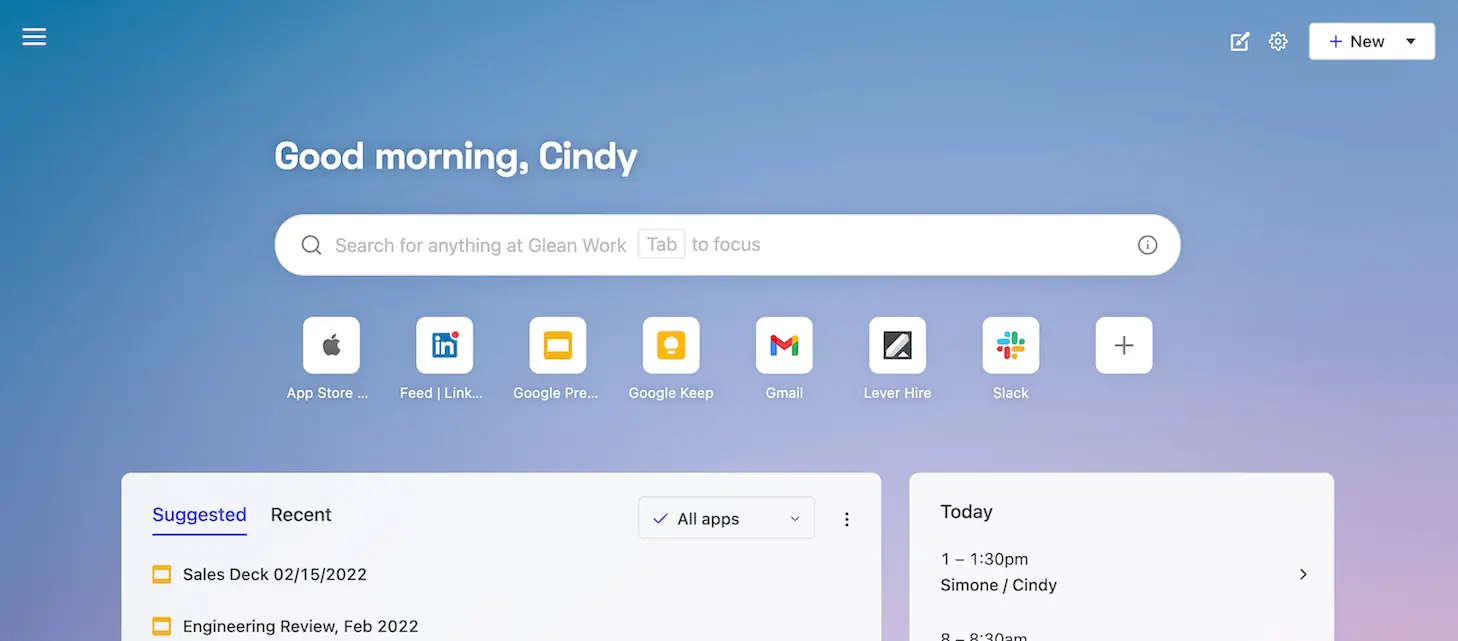

4. Glean Search

Glean Search is an AI-based enterprise search platform that delivers personalized answers across workplace tools. Using deep learning and generative AI, Glean understands the context of every user (including their role, team, and work habits) to surface the most relevant results. Its knowledge graph continuously learns from internal content and interactions.

Key features include:

- Unified search across tools: Searches over 100 integrated apps and systems in real time, consolidating enterprise knowledge into a secure interface

- Contextual deep learning: Uses vector-based semantic search trained on the company’s content, language, and structure for precise results

- Generative AI summaries: Instantly generates document overviews and answers using the most up-to-date data from across connected apps

- Glean Chat: Supports conversational queries and follow-up questions for deeper exploration and insight gathering

- Personalized results: Tailors search output based on role, behavior, and relationships using a company-specific knowledge graph


Considerations for choosing enterprise AI search platforms

Selecting an enterprise AI search platform involves more than evaluating feature checklists. Organizations must align platform capabilities with their operational, security, and scalability requirements. Below are key considerations to guide the selection process:

- Data source coverage: Ensure the platform supports all critical data sources out-of-the-box or through custom connectors. Broad compatibility with structured and unstructured data (from CRMs and cloud storage to internal wikis) is essential for comprehensive search.

- Security and compliance: Evaluate how the platform enforces data access permissions, handles encryption, and supports compliance with regulations and standards such as GDPR, HIPAA, or SOC 2. Look for features like role-based access, audit logging, and identity provider integration.

- Quality of AI and relevance ranking: Assess the effectiveness of the platform’s AI models in understanding natural language, intent, and context. High-quality semantic search, vector embeddings, and feedback loops for relevance tuning are critical for delivering useful results.

- Scalability and performance: The platform should support fast search across large volumes of data and scale with organizational growth. Evaluate performance benchmarks, indexing speed, and support for distributed or hybrid deployments.

- Customization and extensibility: Consider the ability to customize search pipelines, UI, and AI behavior. Availability of APIs, SDKs, and configuration options is important for adapting the platform to specific use cases or enterprise workflows.

- Generative AI capabilities: If generative responses are important, ensure the platform supports RAG or other mechanisms to ground responses in trusted enterprise data. Look for transparency features like citations and summarization controls.

- User experience and adoption: The platform should offer intuitive interfaces, personalization, and integration with daily tools (e.g., Slack, Teams, browsers) to ensure high adoption rates and minimal disruption to user workflows.

- Vendor support and roadmap: Evaluate the vendor’s track record for product updates, support responsiveness, and enterprise readiness. A clear roadmap for AI improvements, integrations, and compliance features is also beneficial.
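A practical way to compare the relevance ranking of candidate platforms is to run the same labeled query set through each one and compute standard retrieval metrics. The Python sketch below computes recall@k and mean reciprocal rank (MRR); the query set, document IDs, and relevance judgments are invented for illustration and would come from your own subject-matter experts.

```python
def recall_at_k(retrieved, relevant, k):
    """Fraction of the relevant documents that appear in the top-k results."""
    if not relevant:
        return 0.0
    return len(set(retrieved[:k]) & set(relevant)) / len(relevant)

def reciprocal_rank(retrieved, relevant):
    """1 / rank of the first relevant result, or 0 if none was retrieved."""
    for rank, doc_id in enumerate(retrieved, start=1):
        if doc_id in relevant:
            return 1.0 / rank
    return 0.0

def evaluate(run, judgments, k=5):
    """Average recall@k and MRR over a labeled query set.

    run: query -> ranked doc IDs returned by the platform under test
    judgments: query -> set of doc IDs marked relevant by reviewers
    """
    queries = list(judgments)
    if not queries:
        return {f"recall@{k}": 0.0, "mrr": 0.0}
    recall = sum(recall_at_k(run.get(q, []), judgments[q], k) for q in queries) / len(queries)
    mrr = sum(reciprocal_rank(run.get(q, []), judgments[q]) for q in queries) / len(queries)
    return {f"recall@{k}": recall, "mrr": mrr}

judgments = {"vpn setup": {"it-7"}, "parental leave": {"hr-2", "hr-9"}}
platform_run = {"vpn setup": ["it-1", "it-7", "it-3"], "parental leave": ["hr-9", "fin-4"]}
print(evaluate(platform_run, judgments))
```

Running the same judgments against each shortlisted platform gives a like-for-like relevance comparison that complements feature checklists.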

Powering enterprise AI search with Instaclustr

Instaclustr for OpenSearch offers a robust, fully managed platform that simplifies the deployment and operation of OpenSearch clusters, enabling organizations to build scalable, high-performance search and analytics solutions. With the introduction of AI Search capabilities, Instaclustr has elevated OpenSearch to a next-generation search platform, integrating advanced machine learning-powered features for smarter, more relevant search experiences.

AI search capabilities in OpenSearch

AI Search for OpenSearch, available on the NetApp Instaclustr Managed Platform, transforms traditional keyword-based search into a more intelligent, context-aware system. It leverages semantic, hybrid, and multimodal search techniques to deliver results that understand user intent rather than just matching keywords. Key features include:

- Semantic Search: Enhances relevance by understanding the meaning behind queries, enabling natural language and conversational search experiences.

- Hybrid Search: Combines semantic understanding with traditional keyword search for improved precision and performance.

- Multimodal Search: Supports simultaneous text and image data queries, unlocking richer and more diverse search results.

- Neural Sparse Search: Utilizes lightweight sparse embeddings for cost-effective and faster query processing.

These capabilities are powered by OpenSearch's AI search features and the ML Commons plugin, which together enable vector embedding generation, vector indexing, and integration with external large language models (LLMs) from providers such as OpenAI or services such as Amazon Bedrock. This allows for advanced use cases such as enterprise data retrieval, intelligent e-commerce search, AI-driven chatbots, and enhanced observability and security analytics.
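For a sense of what hybrid search looks like at the OpenSearch API level, the Python sketch below builds the two request bodies involved: a search pipeline whose `normalization-processor` rescales and combines scores, and a `hybrid` query that pairs a keyword `match` clause with a `neural` clause. It assumes an embedding model has already been deployed through ML Commons and that the index has a vector field populated at ingest time; the index, field, and pipeline names, the model ID, and the 0.3/0.7 weights are placeholders. The code only prints the REST calls rather than sending them, so it runs without a cluster.

```python
import json

# Placeholder names (assumptions): replace with your own index, fields, pipeline, and model ID.
INDEX = "enterprise-docs"
TEXT_FIELD = "passage_text"
VECTOR_FIELD = "passage_embedding"
PIPELINE = "hybrid-search-pipeline"
MODEL_ID = "<your-embedding-model-id>"

def search_pipeline_body(lexical_weight=0.3, semantic_weight=0.7):
    """Min-max normalize each sub-query's scores, then combine them with a weighted mean."""
    return {
        "description": "Normalize and combine lexical and neural scores",
        "phase_results_processors": [
            {
                "normalization-processor": {
                    "normalization": {"technique": "min_max"},
                    "combination": {
                        "technique": "arithmetic_mean",
                        "parameters": {"weights": [lexical_weight, semantic_weight]},
                    },
                }
            }
        ],
    }

def hybrid_query_body(text, k=5):
    """One keyword (match) sub-query and one semantic (neural) sub-query over the same index."""
    return {
        "query": {
            "hybrid": {
                "queries": [
                    {"match": {TEXT_FIELD: {"query": text}}},
                    {"neural": {VECTOR_FIELD: {"query_text": text, "model_id": MODEL_ID, "k": k}}},
                ]
            }
        }
    }

requests = [
    ("PUT", f"/_search/pipeline/{PIPELINE}", search_pipeline_body()),
    ("GET", f"/{INDEX}/_search?search_pipeline={PIPELINE}", hybrid_query_body("parental leave policy")),
]
for method, path, body in requests:
    print(method, path)
    print(json.dumps(body, indent=2))
```

The `weights` list is applied in the same order as the sub-queries, so here the keyword clause gets 0.3 and the semantic clause 0.7.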

Managed AI search with Instaclustr

Instaclustr simplifies the adoption of AI Search by managing the operational complexities associated with deploying and scaling AI-powered search solutions. The platform provides:

- Managed operations: Handles provisioning, scaling, patching, and monitoring, ensuring reliability and security.

- Multi-cloud flexibility: Supports deployment on AWS, GCP, Azure, or on-premises with consistent tooling.

- 24/7 expert support: Offers enterprise-grade SLAs and access to OpenSearch specialists.

By integrating AI Search into OpenSearch, Instaclustr enables organizations to move beyond traditional search paradigms, delivering smarter discovery experiences with reduced operational overhead. Whether enhancing product discovery, building AI-assisted applications, or improving enterprise knowledge search, Instaclustr for OpenSearch provides a powerful, open source solution tailored for modern search needs.

For more information: